Alignment of XIXth century cadasters


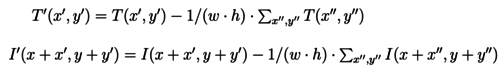

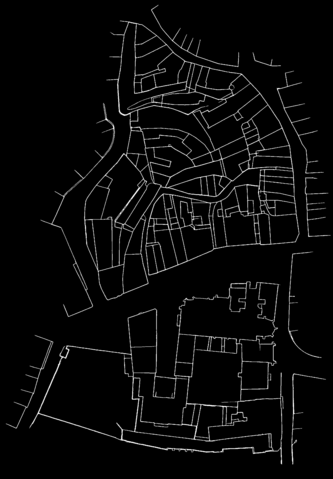


Revision as of 17:43, 21 December 2021

Introduction

TODO: Examples of references (APA style)[1]. [ ] update GitHub links after final push

About a thousand French Napoleonic cadasters have been scanned and now need to be aligned. The catalogue covers many cities, such as La Rochelle, Bordeaux, Lyon, Lille and Le Havre, as well as cities that are no longer under French jurisdiction, such as Rotterdam.

As in the work done by the Venice Time Machine project, the idea is to attach together all the maps of a cadaster in order to obtain a single map of each city.

The main challenge of this project is to automate this process despite the inconsistencies between maps in terms of scale, orientation and conventions. Even though the instructions for drawing the maps were quite strict, some differences remain: for example, the scale may change where there was nothing to show in some areas, and the maps are not always oriented north-up (the orientation is not even always indicated).

Deliverables

- [ ] Tool

- [ ] rectification

- [ ] Alignment

- [ ] JSON generation

- [ ] reconstruction of covered areas

- [ ] PoC: La Rochelle downtown

This project provides an exploration of possible automation for aligning cadastres, as well as the development of an interface (in the form of a Jupyter Notebook) to supervise this task.

Project setup

Methods exploration on Berney's cadaster

For the initial exploration of methods to reattach cadastral maps, the so-called cadastre Berney from Lausanne (1827) was used, since the ground truth is known for this particular case and many processing steps (such as line and class predictions) had already been carried out. The first exercise was made on the first two maps, using the line prediction files. The problem was to detect the parts common to these two maps, in this case the Rue Pépinet and the top of the Rue du petit chêne. Many different methods were tested for this task, mainly with the help of the OpenCV Python library. The research focused on the scale-invariant feature transform (SIFT), the Generalised Hough Transform (GHT)[2] and Template Matching (TM).

Template matching principle

Template matching is provided by OpenCV[1] as the function cv.matchTemplate(). It takes as input a large image and a smaller one, called the template, which it tries to find in the first image. Concretely, the function slides the template over the image and gives a similarity score for each position. Note that the template always fully overlaps the image, so it must be entirely contained in it. The function outputs a matrix holding the score for each position, from which the position of the best score is easily found.

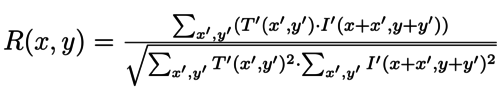

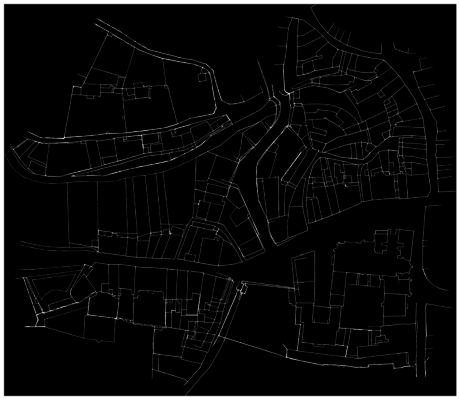

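The function provides several ways to compute this score. The one used for this project is the so-called TM_CCOEFF_NORMED, defined as:

$$R(x,y) = \frac{\sum_{x',y'} T'(x',y') \, I'(x+x',y+y')}{\sqrt{\sum_{x',y'} T'(x',y')^2 \, \sum_{x',y'} I'(x+x',y+y')^2}}$$

where

$$T'(x',y') = T(x',y') - \frac{1}{w h} \sum_{x'',y''} T(x'',y''), \qquad I'(x+x',y+y') = I(x+x',y+y') - \frac{1}{w h} \sum_{x'',y''} I(x+x'',y+y'')$$

and where T and I refer to the pixels of the template and of the initial image respectively, and w and h are the width and height of the template.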

This function is known to give better results on greyscale images. It is therefore sensible to apply it to the line detection files.

First reattachment of two cadastral maps

The method that gave the most satisfying results (in terms of final output and computation time) was template matching. The strategy is to cut a template from one of the maps and find the best match in the other one. Attaching the two maps together is then an almost straightforward task, since the line prediction files only contain black (0) or white (255) pixels: the final result is simply the sum of the two images, shifted according to the best matching position.
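The summation step can be sketched as follows, assuming two single-channel line maps and a known offset of the second map relative to the first. If the template was cut at (ax, ay) in the first map and its best match was found at (bx, by) in the second, that offset is (ax - bx, ay - by). The function paste_maps is a hypothetical helper, not the project's implementation.

```python
import numpy as np


def paste_maps(anchor, target, offset):
    """Sum two binary line maps on a common canvas.

    offset = (dx, dy) is the position of the target's top-left corner
    expressed in anchor pixel coordinates (it may be negative).
    """
    dx, dy = offset
    ha, wa = anchor.shape
    ht, wt = target.shape
    # Bounding box of both maps in anchor coordinates.
    x0, y0 = min(0, dx), min(0, dy)
    x1, y1 = max(wa, dx + wt), max(ha, dy + ht)
    # A wider dtype avoids overflow where white pixels overlap.
    canvas = np.zeros((y1 - y0, x1 - x0), dtype=np.uint16)
    canvas[-y0:-y0 + ha, -x0:-x0 + wa] += anchor
    canvas[dy - y0:dy - y0 + ht, dx - x0:dx - x0 + wt] += target
    return np.clip(canvas, 0, 255).astype(np.uint8)
```

Clipping the sum keeps the output binary (0 or 255) where lines of both maps overlap.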


Challenges Identification / Problem statement

- [ ] Template extraction

- [ ] Orientation/scale

- [ ] Growth

- [ ] Good coherence metric, aligned with human understanding

Organisation

[ ] merge

Timeline summary of first steps

| Week | Matching method | Template extraction | Growth |
|---|---|---|---|
| Week 4 | SIFT | - | 1 to 1 |
| Week 5 | SIFT and GHT | Manual | 1 to 1 |
| Week 6 | GHT and TM | Manual | semi-automated 1 to 1 |
| Week 7 | GHT and TM | Manual | semi-automated 1 to 1 |
| Week 8 | TM | Manual and along lines | semi-automated 1 to 1 |
| Week 9 | TM | Manual and along lines | 1 to 1 or N |
| Week 10 | TM | Manual and along lines | 1 to 1 or N |

Final milestones of semester

| Week | Objective |
|---|---|
| Week 11 | Extract lines on a first set of cadastral maps (La Rochelle or Bordeaux) & manage pipeline |
| Week 12 | Test pipeline on the new extracted lines files |
| Week 13 | Adapt our model or extend it on other cities |
| Week 14 | Final presentation |

Automation process

In order to automate the alignment task, efforts were put into three of the main challenges identified. A pipeline was then built to automatically extract templates, take into account variations in scale and orientation, and iteratively spread over adjacent cadastres to cover large areas. However, the inability to meet the fourth challenge, defining a semantically coherent metric, hindered the generalisation of this approach and led to mixed results.

This exploration was undertaken on the lines pre-extracted from Berney's 1827 cadastres of Lausanne.

Automatic template extraction

In cadastres, the overlapping areas are located along the borders of the maps. Hence, the template extraction process was automated to extract templates around the outermost points that still belong to a detected line along given directions.

To do so, an imaginary path parametrised by an angle is drawn from the center of the cadastre to its edge. Pixel values along this path are extracted and used to retrieve the coordinates of the last non-zero pixel (i.e. the last point lying on a line extracted from the cadastre). A template can then be extracted around this point.

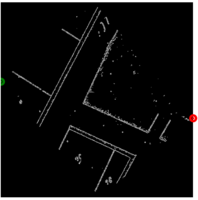

The function automating this task takes an integer N as argument, which defines the number of templates to extract. Templates are then extracted along paths whose angles divide the circle into N equal parts (see extract_templates). Examples are shown in the left-hand plot of the figure on the right.

Nevertheless, these plots mainly highlight the fragility of the matching method. Indeed, they show that the TM score does not align with the human understanding of the problem. Moreover, as TM is highly dependent on the templates, this approach is highly dependent on the chosen number of extracted templates N. For example, extracting 9 templates here resulted in a coherent best match, whereas extracting one fewer yielded wrong results.
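The radial extraction procedure described in this section can be sketched as below. This is an illustration under stated assumptions, not the project's extract_templates function: the names last_line_pixel and extract_templates_sketch, the half_size parameter and the nearest-pixel sampling of the path are all choices made for this example.

```python
import numpy as np


def last_line_pixel(lines, angle):
    """Return (x, y) of the last non-zero pixel met when walking from the
    image center towards the border in direction `angle` (radians)."""
    h, w = lines.shape
    cy, cx = (h - 1) / 2, (w - 1) / 2
    r = np.arange(int(np.hypot(h, w)))
    xs = np.rint(cx + r * np.cos(angle)).astype(int)
    ys = np.rint(cy + r * np.sin(angle)).astype(int)
    # Keep only the part of the ray that lies inside the image.
    inside = (xs >= 0) & (xs < w) & (ys >= 0) & (ys < h)
    xs, ys = xs[inside], ys[inside]
    hits = np.flatnonzero(lines[ys, xs])
    if hits.size == 0:
        return None
    return int(xs[hits[-1]]), int(ys[hits[-1]])


def extract_templates_sketch(lines, n, half_size=50):
    """Extract up to n templates, one per direction of an evenly divided circle.

    Returns a list of ((x0, y0), template) where (x0, y0) is the template's
    top-left corner in the source image.
    """
    h, w = lines.shape
    templates = []
    for angle in np.linspace(0, 2 * np.pi, n, endpoint=False):
        point = last_line_pixel(lines, angle)
        if point is None:
            continue  # no line found in this direction
        x, y = point
        x0, y0 = max(0, x - half_size), max(0, y - half_size)
        x1, y1 = min(w, x + half_size), min(h, y + half_size)
        templates.append(((x0, y0), lines[y0:y1, x0:x1]))
    return templates
```

Because the directions are fixed by N alone, changing N moves every template, which is consistent with the sensitivity to N reported above.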

Orientation and scale integration

One of the factors deteriorating the quality of the matches is the slight variation in orientation. Thus, in order to limit this influence (and potentially to prepare for non-oriented cadastres), the matching process was made orientation invariant (over a given discrete range). The same procedure was applied to scales, as the relative scales of Lausanne's cadastres are mixed between 1:1 and 1:2 (or inversely 1:0.5). The matching process then became a grid search over the specified angles and scales, with scores obtained by matching the extracted template on the modified (rotated and rescaled) target cadastre.

However, besides increasing the computational cost, multiplying the number of parameters also raises the chance that a semantically incoherent match gets the best score (as there is only one, or a few, good matches and all others are misleading).

NB: at this stage of the project, this method was implemented by rescaling and rotating the whole target cadastre. This approach is largely suboptimal and computationally very expensive; it was changed afterwards (as described here).

Peripheral growth

Both of the previously described methods (automatic template extraction, and scale- and orientation-invariant TM) were then combined in an algorithm that iteratively anchors adjacent cadastres to the already integrated ones in order to spread the covered area. The list of adjacent cadastres was manually encoded and the parameters manually fine-tuned to reach meaningful results over several cadastres; see for example the left-hand figure below. However, this method demonstrated poor reliability and generalisation power, as illustrated on the right-hand side: there, template 006 is wrongly matched on 008, and any further consideration of this cadastre would be meaningless and detrimental to the general workflow.

- Iterative growth with successive anchors 001, 009, 010 and 008. Taking into account slight variations in orientation and two scales.

Limitations

Although automated, this approach still exhibited major drawbacks. First, it is computationally expensive. It is also highly unstable, as it depends on the very sensitive TM algorithm. This matching method often produces semantically wrong results, even more so in less dense (or rural) areas. Moreover, poor matches cause dramatic error propagation. Finally, it is very time-consuming, as it requires extensive manual fine-tuning of the parameters to reach meaningful results, even over reduced areas.

These issues inhibit any possibility of generalisation and complete automation. Nonetheless, most of these problems are believed to be, at least partly, solvable by determining a better metric to render the quality of the matches. A measure closer to human understanding could discard semantically wrong matches and prevent errors from plaguing the results. It would also ease the optimisation of the parameters, which could then be handled automatically through Machine Learning algorithms. Such a metric could also take the form of an additional classifier based on more heuristics, or be learned directly by the machine through Deep Learning.

Facing the inability to define a convincing metric, we decided to change paradigm and directly involve human understanding in the loop by developing a tool to supervise the alignment task.

- [x] Trials

- [x] Automatic template extraction

- [x] Adjacent cadastres considerations: iterative growth

- [x] Taking into account: both orientation and scale

- [x] Low computational Efficiency

- [x] Necessity to fine-tune manually parameters

- [x] due to the lack of a metric closer to human understanding

- [x] Sometimes poor results (eg. less dense areas, etc.)

- [ ] Too fragile method ?

- [ ] Leads for a better metric

- [x] And still time consuming and need close supervision => change of paradigm

AlignmentTool

[x] Jupyter Innotater

[x] Rectifying

[x] rotation

[x] name

[x] NOT IMPLEMENTED: scale

[x] Matching process

[x] template selection

[x] target selection

[x] orientation dependent or not

[ ] Output = "raw" network

[x] Rebuilding

[x] homographies

[x] pairwise visualisation

[x] area growth

[x] new network

[x] inverse homographies included

[x] shortest path

The AlignmentTool was developed to offer a highly supervised method to perform the cadastres' alignment.

The backend processes are mainly handled through OpenCV[1], the data structure is managed with NetworkX[3], and the Notebook interface relies on jupyter-innotater[4]. The architecture and operation of each of these components are detailed below. Finally, the results of the proof of concept carried out on La Rochelle's Napoleonic cadastres from 1811 within Ferry's rampart are described and illustrated.

Backbone

The development of this tool compelled us to slightly revise the conceptual and theoretical framework for the matching and composition processes. The three major steps consisting in homologous points retrieval, pair-wise transformations computation and propagation are described in this section.

Matching

The matching model aims at finding two pairs of homologous points in the anchor and target cadastres (see get_target_homologous). It relies on TM (described above). On the anchor cadastres the tagged positions are the top-left and bottom-right corners of the extracted template. On the target one, the corresponding points are retrieved from the best match found.

If both of the cadastres share the same orientation (ie. matches are evaluated without taking into account additional rotations), then the pixel associated with the top-left corner of the template corresponds to the location with the highest value computed through TM and the pixel associated with the bottom-right corner is given by adding the template width and height.

If the cadastres do not share the same orientation, the task requires slightly more effort. The procedure iteratively rotates the template, resulting in images of different shapes padded with void, and performs TM using these rotated templates (see orientation_matching). The coordinates of the best match then correspond to the top-left corner of the best rotated template, which no longer coincides with the top-left corner of the extracted template. The pixel coordinates corresponding to the top-left and bottom-right corners of the original template are retrieved by rotating the original template and computing the displacement of the specified points (see shift_template).

An example of points tracking in the rotated templates is shown on the figure on the right. The figure below displays the results obtained through this method on a pair of non-aligned cadastres.

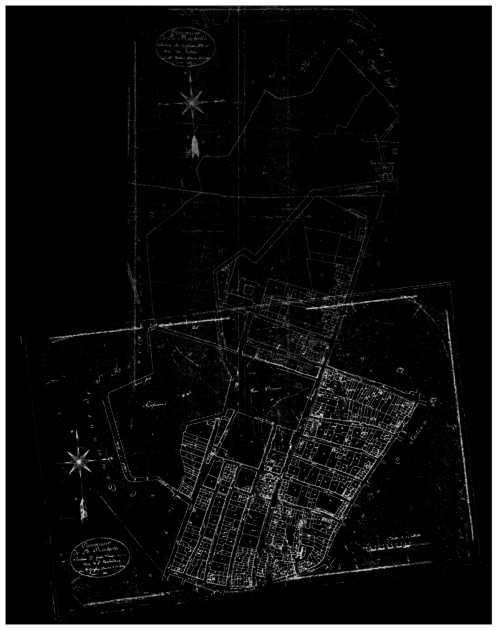

Homography

Homographies are 3-by-3 transformation matrices, widely used in computer vision, that map points in one image to the corresponding points in another image. In the context of this project, homographies are restricted to Euclidean transformations (allowing rotations and translations but preserving the distance between every pair of points). However, as the rest of the implementation is more general, slight modifications of the homography computation could enable the integration of more complex transformations (eg. affine or projective) within the pipeline.

The pairs of homologous points retrieved through the matching process allow the homography between the anchor and target cadastres to be computed. On both images, the pair of coordinates defines a vector from the top-left to the bottom-right tag. As both vectors are considered to refer to the same geographic entity, the cadastres can be re-oriented (relative to one another) by calculating the angle α between these vectors. The translations in x and y are computed by comparing the points corresponding to the top-left corner in the anchor image and in the target one (after re-orientation). The homography matrix is then given by:

$$H = \begin{pmatrix} \cos\alpha & -\sin\alpha & t_x \\ \sin\alpha & \cos\alpha & t_y \\ 0 & 0 & 1 \end{pmatrix}$$

where t_x and t_y denote the translations in x and y.

This process is implemented in the function vector_alignment. It turns a match characterised by two pairs of homologous points into a transformation matrix, which can be applied to the target cadastre to obtain its composition with its anchor through warpTwoImages, based on cv2.warpPerspective.
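A minimal sketch of this computation is shown below, assuming a Euclidean transformation (rotation by α plus a translation) that maps target pixel coordinates into the anchor frame. The function euclidean_homography is a hypothetical stand-in and may differ from the project's vector_alignment, in particular in its sign conventions.

```python
import numpy as np


def euclidean_homography(anchor_tl, anchor_br, target_tl, target_br):
    """Build the 3x3 matrix mapping target coordinates to anchor coordinates
    from two pairs of homologous points (top-left and bottom-right tags)."""
    a_tl, a_br = np.asarray(anchor_tl, float), np.asarray(anchor_br, float)
    t_tl, t_br = np.asarray(target_tl, float), np.asarray(target_br, float)
    va, vt = a_br - a_tl, t_br - t_tl
    # Angle between the two tag vectors.
    alpha = np.arctan2(va[1], va[0]) - np.arctan2(vt[1], vt[0])
    c, s = np.cos(alpha), np.sin(alpha)
    rot = np.array([[c, -s], [s, c]])
    # Translation aligning the rotated target top-left tag with the anchor one.
    trans = a_tl - rot @ t_tl
    h = np.eye(3)
    h[:2, :2] = rot
    h[:2, 2] = trans
    return h


# Synthetic check with a known transformation (30 degrees, shift (5, -3)).
theta = np.deg2rad(30)
true_h = np.array([[np.cos(theta), -np.sin(theta), 5.0],
                   [np.sin(theta), np.cos(theta), -3.0],
                   [0.0, 0.0, 1.0]])
target_tl, target_br = (10.0, 20.0), (110.0, 80.0)
anchor_tl = (true_h @ np.array([10.0, 20.0, 1.0]))[:2]
anchor_br = (true_h @ np.array([110.0, 80.0, 1.0]))[:2]
estimated_h = euclidean_homography(anchor_tl, anchor_br, target_tl, target_br)
```

The resulting matrix can be passed to cv2.warpPerspective(target, H, (width, height)) to draw the target in the anchor frame; a canvas large enough for both images is needed to see the full composition.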

Propagation

Homographies have convenient properties that allow pairwise homographies to be propagated in order to reconstruct larger areas.

- Inversion: if H1,2 is known, then H2,1 is given by the inverse of H1,2. This removes the directionality of the graph.

- Composition: if H1,2 and H2,3 are known, then H1,3 is given by the product of H1,2 and H2,3. This allows any two connected nodes to be linked directly.
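Both properties can be checked numerically. The sketch below assumes the convention that H_{i,j} maps pixel coordinates of cadastre j into the frame of cadastre i; the helper euclid and the numeric values are made up for illustration.

```python
import numpy as np


def euclid(angle_deg, tx, ty):
    """3x3 Euclidean transformation: rotation by angle_deg, then translation."""
    a = np.deg2rad(angle_deg)
    return np.array([[np.cos(a), -np.sin(a), tx],
                     [np.sin(a), np.cos(a), ty],
                     [0.0, 0.0, 1.0]])


h12 = euclid(10, 100, 0)   # maps cadastre 2 into the frame of cadastre 1
h23 = euclid(-5, 0, 50)    # maps cadastre 3 into the frame of cadastre 2

h21 = np.linalg.inv(h12)   # inversion: frame 1 -> frame 2
h13 = h12 @ h23            # composition: frame 3 -> frame 1

# A point of cadastre 3 in homogeneous coordinates.
p3 = np.array([12.0, 34.0, 1.0])
p1_direct = h13 @ p3
p1_stepwise = h12 @ (h23 @ p3)
```

With the opposite convention (H_{i,j} mapping i into j) the order of the product is reversed, so the convention has to be fixed once for the whole graph.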

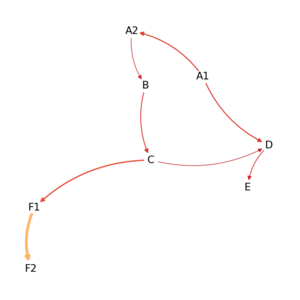

Thus, taking advantage of the network structure of the data (described below), the pairwise homographies can be combined to recover whole areas.

This procedure is done by building a new graph centered on a selected node and linked to every node that was connected to it in the original network (regardless of direction). All other nodes are only connected (as targets) to this central node.

Each of the edges is characterised by a homography between the central node (anchor) and the connected node (target). These homographies are computed as the product of the pairwise homographies along the shortest path linking the selected central node to the target in the original graph (see buildCenteredNetwork).
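The construction of the centered graph can be sketched with NetworkX as below. This is not the project's buildCenteredNetwork: the function name, the edge attribute "H" and the convention that the matrix on an edge anchor→target maps target coordinates into the anchor frame are assumptions made for this example.

```python
import networkx as nx
import numpy as np


def build_centered_network_sketch(graph, center):
    """Return a star-shaped DiGraph linking `center` to every node reachable
    from it (ignoring edge direction), with propagated homographies."""
    undirected = graph.to_undirected()
    centered = nx.DiGraph()
    centered.add_node(center)
    for node in nx.node_connected_component(undirected, center):
        if node == center:
            continue
        path = nx.shortest_path(undirected, center, node)
        h = np.eye(3)
        for a, b in zip(path, path[1:]):
            if graph.has_edge(a, b):
                step = graph[a][b]["H"]                 # stored direction
            else:
                step = np.linalg.inv(graph[b][a]["H"])  # reversed edge
            h = h @ step
        centered.add_edge(center, node, H=h)
    return centered


def translation(tx, ty):
    """Pure translation, used only to build a small test graph."""
    h = np.eye(3)
    h[:2, 2] = (tx, ty)
    return h


g = nx.DiGraph()
g.add_edge("A", "B", H=translation(10, 0))
g.add_edge("C", "B", H=translation(0, 5))
star = build_centered_network_sketch(g, "A")
```

In this toy graph C is reached through B against the direction of the C→B edge, so the inverse of that edge's matrix is used along the path.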

The two images below show an example of cadastres composition, either using direct match (left) or homographies propagation along a path in the network (right). One can see that the quality of the composition is better in the direct match configuration, yet the propagation still provides satisfying results considering that it encompasses the proliferation of errors along four matches.

- Alignment of cadastres with direct edge or through propagation.

Network structure

The matches (when selected by the user) are collected in a directed graph. The nodes are the cadastres (or their labels) and the edges link the anchors (cadastres from which templates have been extracted) to their targets (cadastres matched). The edges store information about the matches between anchors and targets: their score and information related to the matched positions (top-left and bottom-right corners of the extracted template in the anchor and corresponding positions in the target). An example of a possible realisation of such a graph (for La Rochelle, 1811) is shown below.

These graphs can be saved in JSON format with the following generic structure:

{

"directed": true,

"multigraph": false,

"graph": {},

"nodes": [{

"h": int — height of the corresponding image,

"w": int — width of the corresponding image,

"label": str — name

}, {

"h": int — height of the corresponding image,

"w": int — width of the corresponding image,

"label": str — name

}],

"match": [{

"score": float — score of the template matching process,

"anchor_tl": tuple: two int — coordinate of the template top left corner on the anchor,

"anchor_br": tuple: two int — coordinate of the template bottom right corner on the anchor,

"target_tl": tuple: two int — coordinate corresponding to anchor_tl on target,

"target_br": tuple: two int — coordinate corresponding to anchor_br on target,

"anchor": str — name of the anchor cadastre\node,

"target": str — name of the target cadastre\node

}]

}
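As an illustration of this structure, the sketch below converts a NetworkX DiGraph to and from a dictionary with the same keys. The function names graph_to_dict and dict_to_graph and all numeric values are hypothetical, and the project's own save/load functions may be implemented differently. Note that tuples become lists after a JSON round trip.

```python
import json

import networkx as nx


def graph_to_dict(graph):
    """Serialise a match graph using the keys of the structure above."""
    return {
        "directed": True,
        "multigraph": False,
        "graph": {},
        "nodes": [{"h": d["h"], "w": d["w"], "label": n}
                  for n, d in graph.nodes(data=True)],
        "match": [{**d, "anchor": a, "target": t}
                  for a, t, d in graph.edges(data=True)],
    }


def dict_to_graph(data):
    """Rebuild the directed graph from the dictionary form."""
    graph = nx.DiGraph()
    for node in data["nodes"]:
        graph.add_node(node["label"], h=node["h"], w=node["w"])
    for match in data["match"]:
        attrs = {k: v for k, v in match.items() if k not in ("anchor", "target")}
        graph.add_edge(match["anchor"], match["target"], **attrs)
    return graph


# Made-up example with two cadastres and one match.
g = nx.DiGraph()
g.add_node("A2", h=4000, w=6000)
g.add_node("A1", h=4200, w=5000)
g.add_edge("A2", "A1", score=0.83,
           anchor_tl=(10, 20), anchor_br=(110, 220),
           target_tl=(300, 40), target_br=(400, 240))

text = json.dumps(graph_to_dict(g), indent=2)
restored = dict_to_graph(json.loads(text))
```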

User guide

The AlignmentTool service is provided as a Jupyter notebook[5]. It offers easily understandable ways to preprocess, match and recompose the cadastres, and requires only little computational literacy.

The different steps are described in the notebook to guide the user. A few considerations about the functionalities of the tool and the way they are meant to be used are reported in this section.

Preprocessing

AlignmentTool allows the cadastres to be preprocessed in two main ways. They can be reoriented using the bearing arrows that may be drawn on the cadastres, and renamed, which can be very convenient for later use (eg. referring to them directly by their label during the matching process). However, in the context of this project the rescaling step was not included in the preprocessing pipeline. It could be added with little effort based on already developed functions.

These modifications can then be saved. In addition, edges can be computed through Canny edge detection[6] in order to provide a coarse but quick denoising of the images. This can be used as a low-cost line detector.

Matching

The matching process (illustrated in the matching flowchart on the right) takes the form of a constant dialogue between the user and the machine. First, the user starts the exchange by entering the labels of the anchor and target cadastres. The anchor is displayed on the left-hand side, and the user must draw on this image the bounding box of the template to be used for TM. The target cadastre is then displayed on the right-hand side, and the user can select the area within which the TM process should occur. This area should be large enough to completely include the template and all its rotated versions. A boolean parameter also allows the rotations of the template to be disabled if the cadastres have already been correctly oriented. The best match found through TM is then displayed as the composition of both cadastres. The user must evaluate this match and tell the algorithm whether it is satisfying, and should thus be stored, or whether it has to be discarded. This iterative process is meant to be repeated until the studied area is complete.

Visualisation

The results can be visualised within the notebook, either by printing the structure of the graph built during the process or, more graphically, by displaying the composition of a pair or more cadastres. Note that if several representations of the cadastres are available (eg. scans, edges, detected lines, etc.) all of them can be used by adjusting one parameter.

Saving and loading

Finally, two functions provide handy ways to save/load the graphs obtained through the process in/from JSON format. The JSON has the structure described above.

Case Study: La Rochelle 1811

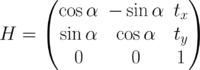

The 1811 cadastres of La Rochelle lying inside the fortifications have been selected to assess the usability and performance of the tool. In order to reduce the noise in the images, lines have been extracted from the cadastres (see here).

| Steps | A2→A1 | A2→B | B→C | A1→D | E→D | C→F2 | F1→F2 | Total |
|---|---|---|---|---|---|---|---|---|
| Attempts | | | | | | | | |
| Time | | | | | | | | |

- [ ] Describe the different steps

- [ ] Time the process

- [ ] Count the number of attempts before success for each match

Lines detection process

At this stage of the project, the only data available were the digitised cadasters themselves; no processing such as line or class detection had been applied to them. As mentioned previously, our method uses the line detection files to find homologous points. It was therefore necessary to process the cadasters we wanted to reattach. This task was done thanks to pretrained models provided by the teaching assistants.

dhSegment

dhSegment[7] presents itself as a tool for Historical Document Processing. It can be used to recognise several types of elements in different digitised document types. It is based on a CNN-architecture.

Procedure

The first step was to slice each map into several parts. Indeed, because of their size, the algorithm was not able to process the maps all at once. Therefore, the maps were cut into 3x3 parts to reduce the computational cost. Each of these slices was then fed into the whole line detection pipeline. Finally, the slices were reattached (a much simpler task than reattaching different maps, since the slicing scheme was kept in memory and only needed to be inverted).
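The slicing and reassembly can be sketched with NumPy as follows. The function names slice_map and reassemble are hypothetical, the slices do not overlap, and the line detection model itself is not shown: any per-slice function that preserves the slice shape can be applied between the two steps.

```python
import numpy as np


def slice_map(image, rows=3, cols=3):
    """Cut an image into rows x cols tiles (nested list, row-major order)."""
    return [np.array_split(band, cols, axis=1)
            for band in np.array_split(image, rows, axis=0)]


def reassemble(tiles):
    """Invert slice_map by stacking the tiles back in the same order."""
    return np.vstack([np.hstack(row) for row in tiles])


# Synthetic map whose size is not a multiple of 3.
image = np.arange(10 * 11).reshape(10, 11)
tiles = slice_map(image)
# Placeholder for the per-slice processing (identity here).
processed = [[tile.copy() for tile in row] for row in tiles]
rebuilt = reassemble(processed)
```

np.array_split tolerates dimensions that are not divisible by 3, at the price of slightly unequal tiles.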

Discussion and limitations

- [ ] Lack of convincing metric / purely qualitative results

- [ ] Failing to automate the task

- [ ] No overlap between slices before line detection (to avoid border effects)

- [ ] No preprocessing of maps (ie cut the non-map parts)

Why doesn't it work ?

Future Work

- [ ] Tool roadmap

- [ ] Integration of other matching methods

- [ ] Integration of more automation (adjacent matching)

- [ ] improve UX

- [ ] Use tool annotation (also discarded matches) to learn better metric ?

- [ ] Taking into account matches from all adjacent cadastres and recursive adjustments

- [ ] eg. Bundle Adjustment[8]

- [ ] Automation

- [ ] more heuristic and domain knowledge (in traditional ML/CV methods)

- [ ] investigate "deeper" approaches => add some literature: eg. [9] [10]

- [ ] Alignment on Openstreetmap

References

- [1] Bradski, G. (2000). The OpenCV Library. Dr. Dobb's Journal of Software Tools. User site: https://opencv.org

- [2] Ballard, D. H. (1981). Generalizing the Hough transform to detect arbitrary shapes. Pattern Recognition, 13(2), 111–122. doi:10.1016/0031-3203(81)90009-1

- [3] Hagberg, A. A., Schult, D. A., & Swart, P. J. (2008). Exploring Network Structure, Dynamics, and Function using NetworkX. In Proceedings of the 7th Python in Science Conference (pp. 11–15). Documentation: https://networkx.org/documentation/stable

- [4] Lester, D. (danlester). (2021). jupyter-innotater. GitHub Repository: https://github.com/ideonate/jupyter-innotater

- [5] Kluyver, T., Ragan-Kelley, B., Pérez, F., Granger, B., Bussonnier, M., Frederic, J., Willing, C. (2016). Jupyter Notebooks – a publishing format for reproducible computational workflows. In F. Loizides & B. Schmidt (Eds.), Positioning and Power in Academic Publishing: Players, Agents and Agendas (pp. 87–90). User site: https://jupyter.org

- [6] Canny, J. (1986). A computational approach to edge detection. IEEE Transactions on Pattern Analysis and Machine Intelligence, (6), 679–698.

- [7] Ares Oliveira, S., Seguin, B., Kaplan, F. (2018). dhSegment: A generic deep-learning approach for document segmentation. GitHub Repository: https://github.com/dhlab-epfl/dhSegment

- [8] Brown, M., & Lowe, D. G. (2007). Automatic panoramic image stitching using invariant features. International Journal of Computer Vision, 74(1), 59–73. doi:10.1007/s11263-006-0002-3

- [9] Sun, K., Hu, Y., Song, J., & Zhu, Y. (2021). Aligning geographic entities from historical maps for building knowledge graphs. International Journal of Geographical Information Science, 35(10), 2078–2107. doi:10.1080/13658816.2020.1845702

- [10] Duan, W., Chiang, Y.Y., Knoblock, C., Jain, V., Feldman, D., Uhl, J., & Leyk, S. (2017). Automatic Alignment of Geographic Features in Contemporary Vector Data and Historical Maps. In Proceedings of the 1st Workshop on Artificial Intelligence and Deep Learning for Geographic Knowledge Discovery (pp. 45–54). Association for Computing Machinery. doi:10.1145/3149808.3149816