Virtual Louvre: Difference between revisions

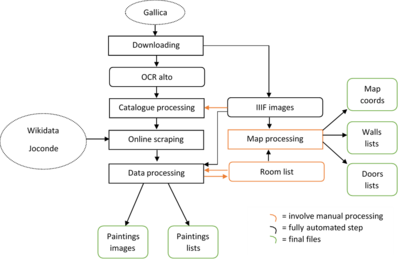


Revision as of 15:47, 13 December 2019

Abstract

The goal of this project is to let anyone experience a bit of the Louvre of 1923. Generated, browsable rooms at scaled proportions will display some of the artworks that were there in 1923. The user will be able to walk through the different rooms and discover information about the paintings on display.

Data processing is done in Python and makes extensive use of pandas, regular expressions and web scraping. The virtualisation uses Unity3D.

Motivation

Wandering between the Greek statues and the centuries-old paintings of the Louvre, we might be tempted to forget that museums themselves have a history. Displays are not immutable. With digitisation, museums have a new opportunity to make their collections available. But a virtual collection is not a virtual museum. A museum, through its rooms and the links that hanging choices create between artworks, is a medium, an experience. Therefore, letting the user walk virtually through the rooms of the Louvre and see which paintings hung in each room is the best way to make sense of the information that the catalogue offers.

Historical introduction

/TODO

Description and evaluation of the methods

Formal description of the source

The catalogue consists of:

- A map of a part of the first floor of the Louvre, on a double page

- An introduction (not used in the project)

- A list of abbreviations.

- A list of paintings, grouped by national schools

- Labeled illustrations of some paintings, scattered through the list.

An item in the list has the form number author (lifespan) title position

- The number can be an index taken from the main catalogue range of unique IDs or from collection-specific ranges. In the latter case, the number is given in parentheses. It can also be a star * or “S. N°” (without number).

- Author and lifespan work together as a block. Paintings are grouped by author. When a painting has the same author as the previous one, the block is replaced by a dash.

- Titles are neither disambiguated nor universal. There are cases where multiple paintings have the same title and the same author. Some titles do not exactly match the “official title” of the painting.

- The position is the name of the room in which the painting hangs. In some cases, it is followed by the cardinal direction of the wall on which the painting hangs. If the position is partially or totally the same as the previous position, the common part is replaced by a dash.

A labeled illustration consists of a black and white image of a painting and a caption giving the number of the painting and the last name of the author, in capitals.

Data processing pipeline

Downloading

ALTO OCR and IIIF images are downloaded directly from Gallica using the tool provided by the lab <add link>.

Evaluation

ALTO OCR does not distinguish bold words, which would have been extremely useful in the following processing steps. It also contains multiple errors that hinder the whole parsing process.

Catalogue processing

The first main step of the processing is to itemize the ALTO into entries. Words are seen as a long stream. An entry begins at word N if the horizontal coordinate of this word is to the left of a certain threshold, the horizontal coordinate of word N-1 is to the right of a certain threshold, and word N matches the format of a painting number, a page header or a title. Once the entries have been determined, non-artwork entries (empty entries, page headers and illustration captions) are eliminated with simple rules. They are usually easy to recognize because they contain only capital letters and numbers, but OCR errors create exceptions that are not well handled.
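The entry-start rule above can be sketched as follows. This is a minimal illustration, not the project's code: the `Word` class, the two threshold values and the `ENTRY_START` pattern are assumptions made for the example, and the real values depend on the page layout.

```python
import re
from dataclasses import dataclass


@dataclass
class Word:
    text: str
    x: float  # horizontal coordinate of the word, as given by the ALTO file


# Hypothetical thresholds: the real ones depend on the scanned page layout.
LEFT_THRESHOLD = 300.0
RIGHT_THRESHOLD = 600.0

# Hypothetical start pattern: a number, a number in parentheses, a star,
# the "S." of "S. N°", or an all-capitals header word.
ENTRY_START = re.compile(r"^(\(?\d+\)?\.?|\*|S\.|[A-Z]{2,})$")


def itemize(words):
    """Cut a stream of words into entries using the three conditions."""
    entries, current = [], []
    for i, word in enumerate(words):
        previous = words[i - 1] if i > 0 else None
        starts_entry = (
            word.x < LEFT_THRESHOLD
            and (previous is None or previous.x > RIGHT_THRESHOLD)
            and ENTRY_START.match(word.text) is not None
        )
        if starts_entry and current:
            entries.append(current)
            current = []
        current.append(word)
    if current:
        entries.append(current)
    return [" ".join(w.text for w in entry) for entry in entries]
```

A word at the left margin only opens a new entry when the previous word ended a line on the right, which is why a caption or header that breaks this pattern can stay glued to an artwork.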

The second main step is the processing of the entries themselves. The different elements of an entry are identified with regex rules. Compared with a machine-learning method such as a CRF, the regex method has the advantage of giving extremely predictable errors, as the failures are all due to bad OCR or itemization errors. On the other hand, it handles exceptions very badly, and the regex rules must be designed very carefully.

The process first identifies the number, then tries to find a lifespan or a dash, deduces the author's location in the entry from that of the lifespan if there is one, and finally tries to separate the title from the position. The position is detected from the presence of a Roman numeral, a known position keyword or a dash. After that, the implicit positions and authors are merged with what is previously known. Authors are simply carried over where there is a dash, but partial positions are merged by replacing the last words of the known position with the words present in the new position.
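The merging rule for dashes can be sketched like this. The function names, the set of dash characters and the example room names are assumptions made for illustration; only the rule itself (carry the author over, replace the last words of the known position) comes from the description above.

```python
# Dash characters that may stand for "same as above" (hyphen, en dash, em dash).
DASHES = {"-", "\u2013", "\u2014"}


def merge_author(previous, current):
    """A lone dash means 'same author as the previous entry'."""
    return previous if current.strip() in DASHES else current


def merge_position(previous, current):
    """Replace the last words of the known position by the new words."""
    tokens = current.split()
    if not tokens or tokens[0] not in DASHES:
        return current  # the position is complete, nothing to merge
    new_words = tokens[1:]
    if not new_words:
        return previous  # a lone dash repeats the whole position
    kept = previous.split()[:-len(new_words)]
    return " ".join(kept + new_words)
```

The sketch also shows why this approach is fragile: it assumes the new fragment has exactly as many words as the part it replaces, so a fragment of a different length yields an impossible position.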

Evaluation

The itemization works well for standard cases, but does not handle exceptions. There are a few cases in which an artwork is not separated from a parasitic header or illustration caption, or in which small artworks are not properly laid out in rows in the catalogue and so remain stuck together. The differentiation between true artwork entries and parasitic entries has been calibrated to minimize the number of true artworks classified as parasites. Therefore, almost no true artwork is misclassified, but some junk is kept in the list. 2709 entries are found.

There are two main points of failure in identifying the parts of an entry: finding the lifespan and finding the position.

- The lifespan is often in the classic format (year_of_birth-year_of_death), but some are a text summing up what is known about the artist's period of life. The most common cause of failure is OCR. Many parentheses are missing and many years are misread as nonsensical series of letters. For example, (1810-1880) turned into i i S i o- i SSo)

- The position identification relies on keywords that have been manually gathered. Although they are as exhaustive as possible and include common OCR errors, there are still misclassifications. Most of the failures are due to bad entry itemization, where two or more real entries are concatenated in a single item.
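As an illustration of the lifespan failure mode, here is a minimal sketch of a regex for the classic format. The pattern and the function name are assumptions, not the rules actually used in the project; the pattern tolerates missing parentheses but still needs two readable four-digit years.

```python
import re

# Hypothetical pattern: two four-digit years separated by a dash,
# with optional (possibly missing) parentheses around them.
LIFESPAN = re.compile(r"\(?\s*(\d{4})\s*[-\u2013]\s*(\d{4})\s*\)?")


def find_lifespan(entry):
    """Return (birth, death, start offset) or None if no lifespan is found."""
    match = LIFESPAN.search(entry)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2)), match.start()
```

Everything before the returned offset can then be treated as number and author. When the years themselves are garbled by OCR, as in the example above, no tolerant pattern can recover them and the entry stays incomplete.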

The merging of the position is also error-prone, because of its naïve implementation. Some final so-called positions are impossible, meaning that they have been badly merged.

A last big issue with the part identification is that if the parsing fails for one entry, all the immediately following entries that share its author or position fail too. As the vast majority of authors are associated with multiple paintings, most of the incomplete rows have failed because of the failure of another row.

At the end of the process, 2254 out of 2709 entries are complete, which represents a ratio of 83%. It is not possible to quantify directly how many are correctly parsed, but a manual random check of 100 artworks suggests that more than 80% probably are (83 correct out of 100 reviewed), so roughly 1800 artworks should be usable.
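The arithmetic behind these figures can be reproduced as follows. The counts come from the text; the 95% interval is an addition for illustration, computed with the usual normal approximation for a proportion and assuming the 100 checked artworks were a simple random sample of the complete entries.

```python
import math

total_entries = 2709
complete_entries = 2254
sample_size = 100
sample_correct = 83

completion_rate = complete_entries / total_entries    # share of complete entries
sample_accuracy = sample_correct / sample_size        # share correct in the check
estimated_usable = complete_entries * sample_accuracy

# Normal-approximation 95% interval on the sampled accuracy (an assumption).
std_error = math.sqrt(sample_accuracy * (1 - sample_accuracy) / sample_size)
low = complete_entries * (sample_accuracy - 1.96 * std_error)
high = complete_entries * (sample_accuracy + 1.96 * std_error)

print(f"complete: {completion_rate:.1%}")
print(f"usable estimate: {estimated_usable:.0f} (between {low:.0f} and {high:.0f})")
```

With a sample of only 100, the estimate is loose: the point estimate is about 1870 and the interval spans roughly 1700 to 2040, which is consistent with "roughly 1800".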

Online scraping

There is no database that contains most of the artworks of the catalogue, only various small websites from which some of them can be retrieved. Wikidata was chosen because of its relative completeness, ease of querying and digital-humanities-friendly principles.

For each painting, a query is constructed with the last name and one of the first names of the author. Using only one first name in the query is a tradeoff between finding wrong authors and missing authors whose full name is not commonly known. The image URL and dimensions are then scraped from the HTML with BeautifulSoup. If there is no image URL, an attempt is made to get the Joconde ID of the painting and find the right pop.culture.gouv.fr page.

Unsuccessful attempts were made to query the Joconde government database and AKG Images directly. They failed because no way was found to send HTTP queries to them directly.
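A query built with only one first name could look like the sketch below. The function name, its parameters, the inclusion of the title and the use of the Wikidata site search page are assumptions; the text does not say which endpoint or which search terms the project actually used.

```python
from urllib.parse import urlencode


def build_search_url(last_name, first_names, title):
    """Build a Wikidata search URL from the title and a shortened author name."""
    # Keep only the first of the given first names (the tradeoff described above).
    parts = first_names.split()
    first = parts[0] if parts else ""
    terms = " ".join(part for part in (title, first, last_name) if part)
    return "https://www.wikidata.org/w/index.php?" + urlencode({"search": terms})


print(build_search_url("Rubens", "Pierre Paul", "La Kermesse"))
```

The returned page would then be parsed for the image URL and the dimensions, for example with BeautifulSoup as described above.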

Performance evaluation

690 images and 696 painting dimensions are found. 658 paintings have both.

The reasons for this weak result are that Wikidata is incomplete, that a single parsing error makes the query fail, and that the names of authors or paintings do not always match between the catalogue and the database. For example, Rembrandt is named Rembrandt Harmensz van Rijn in the catalogue, but Wikidata labels him simply as Rembrandt.

On the other hand, as Wikidata never returns results that only partially match the query, it is highly reliable.

Even so, the results are not fully correct because of the lack of specificity of the titles in the catalogue. As some titles are common to several paintings by the same author, some images appear multiple times, meaning that at least one of them is obviously wrong. In general, there is never any guarantee that the image retrieved is the right one and not that of another painting with the same title.

As even scraping Wikidata does not provide fully reliable information, it was decided to give priority to quality over quantity and not to use Google searches to get images, which would have been even more inaccurate.

Data cleaning and processing

This part groups together multiple small steps performed to generate clean data usable in Unity.

Positions are first split into room and specific wall using a small regex. They are then matched to a manually established list of valid rooms. The list itself was created from the uncleaned room names. It associates every valid name with all its known variations.

The width and height of the paintings, the authors and the titles are standardized (punctuation removal, common format).

Finally, the paintings are turned into JSON files according to their positions.
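The split and the matching against the valid-room list can be sketched as below. The regex, the wall vocabulary (full French direction words) and the contents of `ROOM_VARIANTS` are assumptions made for the example; the real list was built by hand from the catalogue.

```python
import re

# Hypothetical pattern: a room name optionally followed by a cardinal direction.
WALL = re.compile(
    r"^(?P<room>.*?)[\s,]*\b(?P<wall>nord|sud|est|ouest)\b\.?$", re.IGNORECASE
)

# Hypothetical extract of the valid-room list: valid name -> known variations.
ROOM_VARIANTS = {
    "Salle des Sept Cheminées": ["III", "Salle des Sept Cheminees"],
    "Grande Galerie": ["Gde Galerie"],
}


def split_position(position):
    """Return (room, wall); wall is None when no direction is given."""
    match = WALL.match(position.strip())
    if match:
        return match.group("room").strip(), match.group("wall").lower()
    return position.strip(), None


def normalize_room(room, variants=ROOM_VARIANTS):
    """Map a raw room name to its valid name, or None if it is unknown."""
    key = room.casefold()
    for valid, names in variants.items():
        if key == valid.casefold() or key in (n.casefold() for n in names):
            return valid
    return None
```

Returning `None` for unknown rooms, rather than guessing the closest name, keeps unmatched paintings visible so that the list can be completed by hand.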
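Grouping the cleaned paintings by room before export can be sketched as follows. The field names (`room`, `title`, `width`, `height`) and the one-document-per-room layout are assumptions; the text only states that the JSON files follow the positions.

```python
import json
from collections import defaultdict


def group_by_room(paintings):
    """Return one JSON document (as a string) per room name."""
    rooms = defaultdict(list)
    for painting in paintings:
        rooms[painting["room"]].append(painting)
    # ensure_ascii=False keeps accented French titles readable in the files.
    return {
        room: json.dumps(items, ensure_ascii=False, indent=2)
        for room, items in rooms.items()
    }


# Invented sample rows, for illustration only.
sample = [
    {"room": "Grande Galerie", "title": "Paysage", "width": 1.2, "height": 0.8},
    {"room": "Grande Galerie", "title": "Portrait", "width": 0.6, "height": 0.9},
    {"room": "Salle des Sept Cheminées", "title": "Scène", "width": 2.0, "height": 1.5},
]
documents = group_by_room(sample)
```

Each string in `documents` could then be written to its own file and read on the Unity side.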

Evaluation

Because the list of valid rooms is manually created only with the unclean information given by the catalogue, wrong decisions could have been made when grouping the names, so paintings may have been attributed to the wrong room.

Text cleaning and standardizing present no particular pitfall.

Picture retrieval

Catalogue pictures are retrieved. Using the ALTO again, illustrations and their corresponding captions are selected. Because of OCR errors, two attempts are made to find the right entry for an illustration: first based only on the number, then based on the number and the author. If exactly one match is found, the picture is cropped from the IIIF image using the ALTO coordinates.
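The two-attempt matching can be sketched like this. The dictionary keys and the function name are assumptions, and the second attempt is interpreted here as a refinement of the first (same number, then same number and last name).

```python
def match_caption(number, last_name, entries):
    """Find the single entry matching an illustration caption, or None."""
    # First attempt: the number alone.
    same_number = [e for e in entries if e["number"] == number]
    if len(same_number) == 1:
        return same_number[0]
    # Second attempt: the number and the capitalised last name of the caption.
    same_both = [
        e for e in same_number if last_name.upper() in e["author"].upper()
    ]
    if len(same_both) == 1:
        return same_both[0]
    return None  # no match, or still ambiguous
```

Requiring exactly one match means an ambiguous caption is dropped rather than attached to a possibly wrong painting.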

Pictures are also retrieved from the URLs using urllib.

Evaluation

Not all illustrations could be matched. Some failures were due to OCR errors on the author name or numbers (especially on the parentheses that distinguish the two series of IDs). Some were due to a design flaw in the catalogue: as the caption consists only of a number and a last name, all the unnumbered paintings by the same author are indistinguishable.

Combining URLs and the catalogue itself, 787 images are available, which is about 29% of the 2709 entries detected.

Map processing

The processing is performed fully manually with the aid of tkinter and is based on the valid room list previously established. It consists of two parts.

The first is dedicated to the creation of the rooms themselves in Unity. Using a naïve homemade interface, the relative coordinates of the corners of each room and their doors are extracted by clicking on them on the map. For each room, walls and doors are stored in dedicated JSON files.
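The data side of this step can be sketched as below, leaving the tkinter interface aside. The record layout, the function names and the choice to express coordinates relative to the map image size are assumptions; the text does not specify what the coordinates are relative to.

```python
import json


def to_relative(point, map_width, map_height):
    """Convert a clicked pixel position to coordinates between 0 and 1."""
    x, y = point
    return [x / map_width, y / map_height]


def room_record(name, corners, doors, map_size):
    """Build the JSON-ready description of one room from the clicked points."""
    width, height = map_size
    rel = [to_relative(c, width, height) for c in corners]
    # Each wall joins two consecutive corners; the last wall closes the room.
    walls = [[rel[i], rel[(i + 1) % len(rel)]] for i in range(len(rel))]
    return {
        "name": name,
        "walls": walls,
        "doors": [to_relative(d, width, height) for d in doors],
    }


record = room_record(
    "III", [(0, 0), (100, 0), (100, 50), (0, 50)], [(50, 0)], (200, 100)
)
print(json.dumps(record, indent=2))
```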

The second serves to display a map indicating the current room in Unity. With a similar interface, the absolute position of the center of each room is extracted by clicking on it.

Performance evaluation

Not all valid rooms appear on the map. Some are known to belong to the Louvre. For example, two of the four sides of the Cour Carrée are missing from the map. On the other hand, some room names are probably OCR or merging artefacts. Of the 108 “valid” rooms proposed, only 75 have been identified. Furthermore, the catalogue contains inconsistencies. Some rooms that are seemingly the same are given two different names, like III and Salle des Sept Cheminées or Salle des Meubles XVIIIe and Salle du Mobilier XVIIIe. All this considered, there is a non-negligible risk that wrong choices were made and that the rooms do not exactly correspond to the historical truth.

A conceptual limitation is that each room is defined individually with its own set of doors. Therefore, a door is only connected to its own room, and there is no connection between the two faces of the same door in two different rooms.

Construction of the virtual Louvre

/TODO

Step X

Performance evaluation

Description of final interface

/TODO >100 words

Milestone

Schedule

| Progress | Artwork retrieval | Map processing | Virtualisation |
|---|---|---|---|
| Done | Parsing of the catalogue | Creation of a GUI | Generation of a room |
| | Connection with Wikidata | | |
| To be done | Connection with other databases | Use of the GUI | Painting and doors generation |
| | Retrieval of the catalogue pictures | Consistency of room names | User interface and camera |
| | Link everything together | | |

Tasks

Catalogue parsing

Around 2200 out of 2700 artworks have been parsed. Improvement is limited due to OCR.

Online database scraping

Scraping Wikidata allowed us to get around 300 pictures of paintings (out of 2200). As no online database seems to be exhaustive enough, we need to multiply the sources to get as many pictures of paintings as possible and deal with inconsistencies across the different databases. We plan to introduce a confidence index for each picture, and to use the pictures of paintings from the catalogue itself.

Map processing

A small GUI has been created using tkinter to allow us to perform room identification in a systematic way. We need to perform the manual extraction and labelling of the rooms. The consistency of the room names with those of the catalogue needs to be ensured.

Room generation in Unity

Instead of generating the whole Louvre in one go, we take advantage of the video-game-oriented features of Unity3D to create each room on its own and travel between rooms through "gates". We take a list of walls as input and generate the room in Unity. We chose to define each wall as an object with two points and an orientation (north, west, etc.). We still need to add the generation of the doors.
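The wall definition can be sketched on the Python side as a small data class. The class and field names are assumptions, and the Unity objects themselves would be written in C#; this only illustrates the "two points and an orientation" input format.

```python
import math
from dataclasses import asdict, dataclass


@dataclass
class Wall:
    start: tuple      # (x, y) of the first end of the wall
    end: tuple        # (x, y) of the second end of the wall
    orientation: str  # "north", "east", "south" or "west"

    def length(self):
        """Length of the wall, useful for placing paintings along it."""
        return math.dist(self.start, self.end)


wall = Wall(start=(0.0, 0.0), end=(3.0, 4.0), orientation="north")
print(asdict(wall), wall.length())
```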

Painting generator

We have to create the paintings at the right size, displaying the author and the description, and then attach each one to the right wall.

User environment

The player and the ability to interact with the environment still have to be created.

Linking everything together

The whole pipeline is almost complete. We need to map the Python representation of rooms and artworks to a Unity3D one and agree on a data storage structure.