Switzerland Road extraction from historical maps


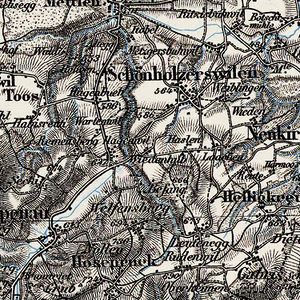

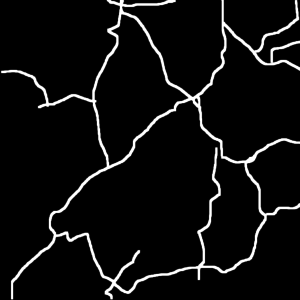

Figure 6: Map with highlighted predictions

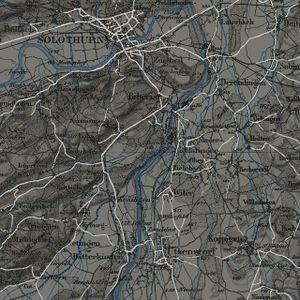

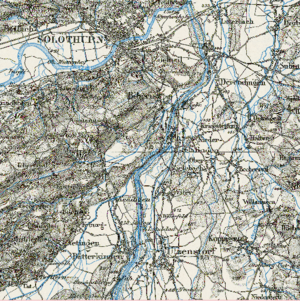

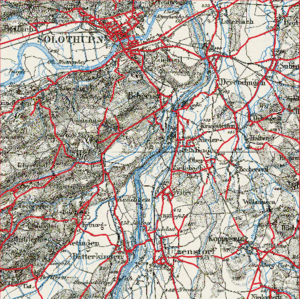

Figure 7: Original map (target region)

Revision as of 14:35, 22 December 2021

Introduction

Historical maps provide valuable information about the spatial transformation of the landscape over time. This project, based on historical maps of Switzerland, aims to vectorize the road network and land cover and to visualize the transformation using a machine vision library developed at the DHLAB.

The main data source of this project is GeoVITe (Geodata Versatile Information Transfer environment), a browser-based access point to geodata for research and teaching, operated by the Institute of Cartography and Geoinformation of ETH Zurich (IKG) since 2008.

Motivation

Historical maps contain rich information that is useful for urban planning, historical studies, and various other humanities research. Digitizing large volumes of printed documents is an essential step before further research can be done. However, most historical maps are available only as scanned raster images. To use the geographic data extracted from these maps conveniently in GIS software, the maps must be vectorized.

Vectorization, however, has long been a challenge because it has traditionally relied on manual tracing. In this project, we use the dhSegment tool to automate vectorization. The model is trained on 60 high-resolution patches (5 km × 5 km), tested on randomly selected patches, and used to approximate the idealised main roads of the Dufour map for the selected region: Solothurn in Switzerland.

Description of the Deliverables

GitHub repository [1] with all the materials needed to demonstrate the results of applying dhSegment to road extraction from historical maps, together with vectorization, skeletonization and georeferencing:

- data - folder with input data for the dhSegment tool. It contains 2 folders: 'images' (60 JPEG patches of 1000 by 1000 pixels from different parts of the Dufour map) and 'labels' (60 PNG binary labels with road annotations for these patches). The images in this folder were used as the training dataset for dhSegment.

- images_predict - folder with 9 overlapping patches of the Dufour map. These patches cover a small region of Switzerland containing Solothurn. This region was not included in the training dataset.

- img - folder that contains the results of road segmentation, skeletonization, vectorization and georeferencing for the selected testing dataset:

1) original_tiff_patch - folder with 9 original tiff patches downloaded from GeoVITe. They contain information about the geographic coordinates.

2) Final_line.dbf - one of the automatically generated files.

3) Final_line.points - file with Ground Control Points.

4) Final_line.shp - the final result of the vectorization: the vectorized historical map of the Solothurn region.

5) Final_line.shx - one of the automatically generated files.

6) Full_image.png - predictions of roads for the region combined from 9 testing patches (Solothurn).

7) Full_map_pred.png - predictions of roads highlighted in the original map of the region.

8) georeferenced_sk.tif - georeferenced vectorized map of the predicted roads.

9) sk_Full_image.png - result after skeletonization of the Full_image.png.
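
Combining the 9 overlapping test patches into Full_image.png, and highlighting the predictions on the original map as in Full_map_pred.png, can be sketched as follows. This is a minimal NumPy sketch, not the project's notebook code: it assumes the dhSegment outputs have already been thresholded into boolean road masks and that the top-left pixel offset of each patch in the mosaic is known. `stitch_predictions` and `highlight` are hypothetical names, and the offsets in any usage are illustrative.

```python
import numpy as np


def stitch_predictions(patches, offsets, shape):
    """Combine overlapping boolean patch predictions into one mosaic.

    patches: list of 2-D boolean road masks, one per patch (assumed thresholded)
    offsets: list of (row, col) top-left positions of each patch (hypothetical)
    shape:   (height, width) of the full mosaic
    """
    mosaic = np.zeros(shape, dtype=bool)
    for patch, (r, c) in zip(patches, offsets):
        h, w = patch.shape
        # Logical OR: a pixel is road if any overlapping patch predicts it
        mosaic[r:r + h, c:c + w] |= patch.astype(bool)
    return mosaic


def highlight(rgb, mask, color=(255, 0, 0)):
    """Paint predicted road pixels in a solid colour on a copy of the map."""
    out = rgb.copy()
    out[mask] = color
    return out
```

Taking the logical OR in the overlap zones keeps a road pixel if any patch detected it; averaging the per-patch probabilities before thresholding would be a reasonable alternative if the raw network outputs are kept.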

Notebooks containing the main coding parts:

- dhSegment.ipynb.zip - compressed Jupyter notebook with the results of road segmentation for images_predict. The code was taken from this repository: https://github.com/dhlab-epfl/dhSegment-torch and modified for the purpose of this project; all additional required files can be found in that repository. For further instructions about the model, please refer to https://dhsegment.readthedocs.io/en/latest/index.html.

- skeletonization.ipynb - Jupyter notebook with code for skeletonization.

- vectorization.ipynb - Jupyter notebook with code for vectorization.

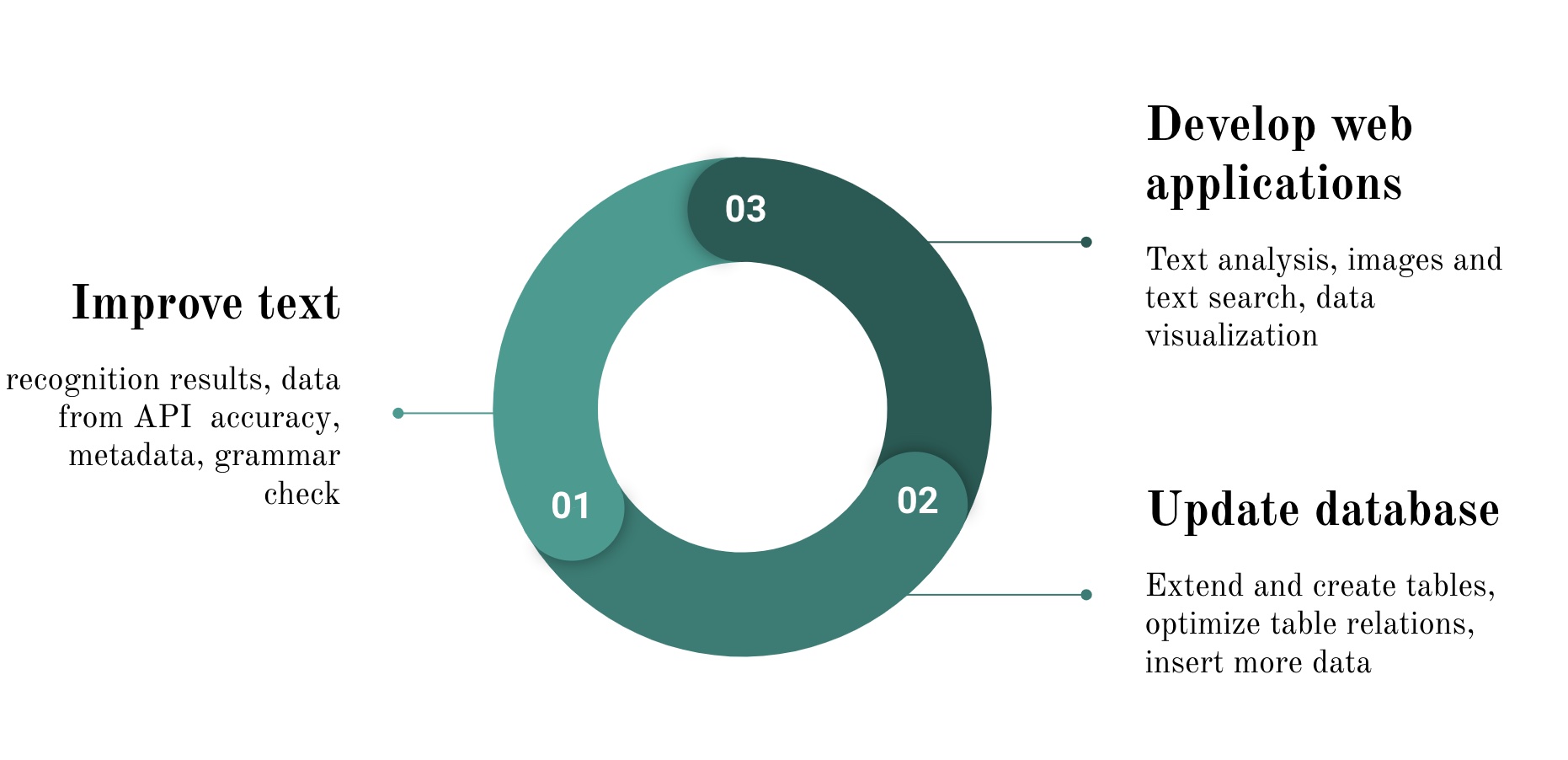

Plan and Milestones

Milestone 1:

- Choose the topic for the project, present the first ideas. Get familiar with the data provided.

- Define the final subject of the project. Find reliable sources of information. Get familiar with the dhSegment tool.

Milestone 2: (Now)

- Prepare a small dataset (60 samples) for training and testing the Convolutional Neural Network, the main part of the dhSegment tool. This dataset should consist of small patches extracted from the Dufour Map of Switzerland, their JPEG versions, and binary labels created in GIMP.

- Determine how to download a large number of samples from GeoVITe.

- Prepare for the mid-term presentation and write the project plan and milestones.

- Try the dhSegment tool on the created dataset. Evaluate the results and modify the algorithm. Draw conclusions about using this tool for road extraction, including the advantages and disadvantages of this approach.

Milestone 3:

- Download the final dataset automatically using a Python script: patches with corresponding coordinates that completely cover the selected region of Switzerland.

- Test the dhSegment tool on the final dataset.

Milestone 4:

- Obtain the vectorized road map using skeletonization in Python with OpenCV.

- Prepare a final presentation.

| Deadline | Task | Completion |
|---|---|---|
| By Week 4 | | ✓ |
| By Week 6 | | ✓ |
| By Week 8 | | ✓ |
| By Week 10 | | ✓ |
| By Week 12 | | ✓ |
| By Week 14 | | ✓ |

Methodology

Dataset:

- Dufour Map from GeoVITe

- The 1:100 000 Topographic Map of Switzerland was the first official series of maps that encompassed the whole of Switzerland. It was published in the period from 1845 to 1865 and thus coincides with the creation of the modern Swiss Confederation.

- Classification: Main roads

- Layer: Topographic Raster Maps - Historical Maps - Dufour Maps

- Coordinate system: CH1903/LV03

- Predefined Grids: 1:25000

- Patch Size: 1000 by 1000 pixels

- Selected Region: Solothurn (No. 1127)

DhSegment:

A generic framework for historical document processing using a deep learning approach, created by Benoit Seguin and Sofia Ares Oliveira at DHLAB, EPFL.

Data preparation:

- GeoVITe: automatic data crawling / manual data access

- Swisstopo: black-and-white, low-resolution images, which are difficult to annotate
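
Automatic crawling first needs a set of patch extents that completely cover the selected region. A minimal sketch, assuming square tiles in LV03 metres (5 km, matching the patch size given in the Introduction), an optional overlap, and a hypothetical region extent. It only computes bounding boxes and does not contact GeoVITe, whose download interface is not described here; `tile_grid` is a hypothetical name.

```python
def tile_grid(xmin, ymin, xmax, ymax, tile_size, overlap=0):
    """Return (x0, y0, x1, y1) boxes that together cover the region.

    Coordinates are assumed to be LV03 metres (easting, northing).
    Neighbouring tiles share `overlap` metres; the last row and column
    may extend past xmax/ymax so that nothing is left uncovered.
    """
    step = tile_size - overlap
    if step <= 0:
        raise ValueError("overlap must be smaller than tile_size")
    boxes = []
    y = ymin
    while y < ymax:            # rows of tiles, south to north
        x = xmin
        while x < xmax:        # tiles within a row, west to east
            boxes.append((x, y, x + tile_size, y + tile_size))
            x += step
        y += step
    return boxes


# Hypothetical 10 km x 10 km extent, tiled into 5 km patches
for box in tile_grid(600000, 200000, 610000, 210000, 5000):
    print(box)
```

A small overlap between neighbouring tiles makes it easier to stitch the predictions later, since roads crossing a tile border then appear in both patches.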

Labeling:

60 patches (1000 by 1000 pixels), labeled in GIMP for model testing:

- Original patches with spatial information: tiff

- Patches for training: jpeg

- labels in black and white: png
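
Labels painted with a soft or anti-aliased brush in GIMP can contain intermediate grey values along road edges, so it may help to force them back to a strict two-value mask before training. A minimal NumPy sketch, assuming roads are painted white on a black background and using a hypothetical threshold of 128; the exact label encoding dhSegment expects should be checked in its documentation. `binarize_label` is a hypothetical name.

```python
import numpy as np


def binarize_label(label, threshold=128):
    """Return a uint8 mask with road = 255 and background = 0.

    Assumes roads were painted white (bright) on a black background.
    RGB(A) inputs are converted to grey by averaging the colour channels.
    """
    arr = np.asarray(label, dtype=float)
    if arr.ndim == 3:
        arr = arr[..., :3].mean(axis=2)  # drop alpha, average R, G, B
    return np.where(arr >= threshold, 255, 0).astype(np.uint8)
```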

OpenCV:

OpenCV is used for skeletonization, which reduces the foreground regions of a binary image to a skeletal remnant (typically 1 pixel wide) that largely preserves the extent and connectivity of the original region while discarding most of the original foreground pixels. Subsequent processing can then run quickly on this lightweight skeleton instead of performing large, memory-intensive operations on the original image. The skeleton is built from two basic morphological operations, dilation and erosion, as follows:

1) Start with an empty skeleton.

2) Compute the opening of the original image (an erosion followed by a dilation).

3) Subtract the opening from the original image to obtain a temporary image (temp).

4) Refine the skeleton by computing the union of the current skeleton and temp, then erode the original image.

5) Repeat steps 2 to 4 until the original image is completely eroded.
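
The skeletonization loop described above can be sketched in a few lines. To keep the example self-contained it uses plain NumPy with a 3 by 3 cross-shaped structuring element instead of OpenCV; in OpenCV the helpers correspond to `cv2.erode`, `cv2.dilate` and `cv2.morphologyEx` with `cv2.MORPH_OPEN`, with `cv2.subtract` and `cv2.bitwise_or` for the set operations. Treating pixels outside the image as background is an assumption of this sketch, and the function names are hypothetical.

```python
import numpy as np


def erode(img):
    """Binary erosion with a 3x3 cross; outside the image counts as background."""
    p = np.pad(img, 1, mode="constant", constant_values=False)
    return (p[1:-1, 1:-1] & p[:-2, 1:-1] & p[2:, 1:-1]
            & p[1:-1, :-2] & p[1:-1, 2:])


def dilate(img):
    """Binary dilation with the same 3x3 cross."""
    p = np.pad(img, 1, mode="constant", constant_values=False)
    return (p[1:-1, 1:-1] | p[:-2, 1:-1] | p[2:, 1:-1]
            | p[1:-1, :-2] | p[1:-1, 2:])


def morphological_skeleton(binary):
    img = np.asarray(binary, dtype=bool).copy()
    skel = np.zeros_like(img)           # start with an empty skeleton
    while img.any():                    # stop once the image is fully eroded
        opened = dilate(erode(img))     # opening = erosion then dilation
        temp = img & ~opened            # original image minus its opening
        skel |= temp                    # union with the current skeleton
        img = erode(img)                # erode the image for the next pass
    return skel
```

On a filled 5 by 9 pixel rectangle this loop keeps 13 of the 45 pixels, and a road that is already one pixel wide is returned unchanged. Note that this morphological skeleton is not guaranteed to be connected, which matters for the vectorization step that follows.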

GDAL:

GDAL (Geospatial Data Abstraction Library) is used for processing raster and vector geospatial data. As a library, it presents a single raster abstract data model and a single vector abstract data model to the calling application for all supported formats. In our project, it is mainly used for vectorization.
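
The idea of turning a skeleton raster into vector geometry can be sketched without calling GDAL: the snippet links each pair of 8-connected skeleton pixels with a two-point segment, converts pixel centres to map coordinates with the six-coefficient geotransform convention that GDAL's `GetGeoTransform()` returns, and emits WKT. This is an illustrative simplification, not the project's vectorization code; a real pipeline would also merge the segments into longer polylines and write them to a shapefile, for example through OGR, whose `ogr.CreateGeometryFromWkt` accepts WKT like this. The function names and geotransform values are hypothetical.

```python
def pixel_to_map(col, row, gt):
    """GDAL geotransform convention: X = gt0 + col*gt1 + row*gt2, Y = gt3 + col*gt4 + row*gt5."""
    x = gt[0] + col * gt[1] + row * gt[2]
    y = gt[3] + col * gt[4] + row * gt[5]
    return x, y


# Half of the 8-neighbourhood, so each neighbouring pair is visited once
FORWARD = ((0, 1), (1, -1), (1, 0), (1, 1))


def skeleton_segments(skel):
    """Return ((col, row), (col, row)) pairs joining adjacent skeleton pixels."""
    h, w = len(skel), len(skel[0])
    segments = []
    for r in range(h):
        for c in range(w):
            if not skel[r][c]:
                continue
            for dr, dc in FORWARD:
                rr, cc = r + dr, c + dc
                if 0 <= rr < h and 0 <= cc < w and skel[rr][cc]:
                    segments.append(((c, r), (cc, rr)))
    return segments


def segments_to_wkt(segments, gt):
    """Build a MULTILINESTRING in map coordinates, using pixel centres."""
    if not segments:
        return "MULTILINESTRING EMPTY"
    parts = []
    for (c0, r0), (c1, r1) in segments:
        x0, y0 = pixel_to_map(c0 + 0.5, r0 + 0.5, gt)
        x1, y1 = pixel_to_map(c1 + 0.5, r1 + 0.5, gt)
        parts.append(f"({x0} {y0}, {x1} {y1})")
    return "MULTILINESTRING (" + ", ".join(parts) + ")"
```

Adding 0.5 to the pixel indices places each vertex at the pixel centre rather than at its top-left corner, which avoids a half-pixel shift of every road line.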

QGIS:

QGIS is an open-source Geographic Information System. In our project it is used for georeferencing, with the coordinates of the four image corners as ground control points.
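
With the four corner coordinates as ground control points, an affine transformation (comparable to the first-order polynomial transformation offered by the QGIS Georeferencer) can be estimated by least squares: six unknowns and eight equations. A minimal NumPy sketch, not the QGIS implementation; the corner coordinates below are hypothetical LV03 values for a 1000 by 1000 pixel patch covering 5 km, i.e. 5 m per pixel, and `fit_affine` is a hypothetical name. The result is returned in GDAL geotransform order.

```python
import numpy as np


def fit_affine(pixel_pts, map_pts):
    """Least-squares affine fit from ground control points.

    pixel_pts: (col, row) positions in the image
    map_pts:   matching (X, Y) map coordinates
    Returns gt with X = gt[0] + col*gt[1] + row*gt[2]
                    Y = gt[3] + col*gt[4] + row*gt[5]
    """
    px = np.asarray(pixel_pts, dtype=float)
    mp = np.asarray(map_pts, dtype=float)
    if len(px) < 3:
        raise ValueError("an affine fit needs at least 3 GCPs")
    # Design matrix [1, col, row]; X and Y are fitted independently
    A = np.column_stack([np.ones(len(px)), px[:, 0], px[:, 1]])
    (x0, a, b), *_ = np.linalg.lstsq(A, mp[:, 0], rcond=None)
    (y0, d, e), *_ = np.linalg.lstsq(A, mp[:, 1], rcond=None)
    return (float(x0), float(a), float(b), float(y0), float(d), float(e))


# Hypothetical GCPs: image corners of a 1000 x 1000 px patch -> LV03 metres
corners_px = [(0, 0), (1000, 0), (0, 1000), (1000, 1000)]
corners_map = [(600000, 205000), (605000, 205000),
               (600000, 200000), (605000, 200000)]
gt = fit_affine(corners_px, corners_map)
```

Because four GCPs overdetermine the six affine parameters, the least-squares residuals (also returned by `np.linalg.lstsq`) can serve as a quick check for a mistyped corner coordinate.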

Limitation

The main limitation of our project comes from the data source platform. GeoVITe only allows small patches to be downloaded, while automatic downloading from other sources yields unsatisfactory, low-quality images. We therefore focused only on the selected region of Switzerland.

Results
