Reconstruction of Partial Facades: Difference between revisions

| Line 357: | Line 357: | ||

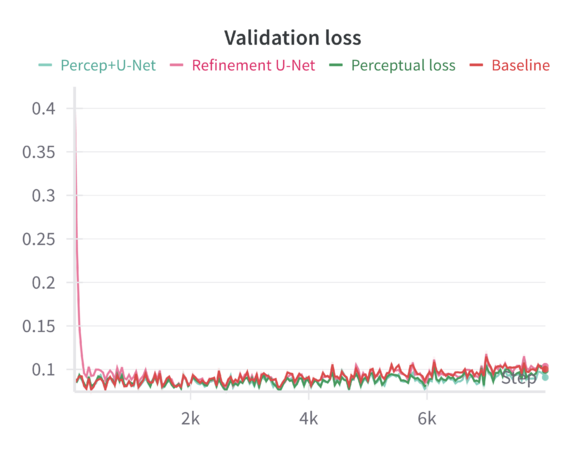

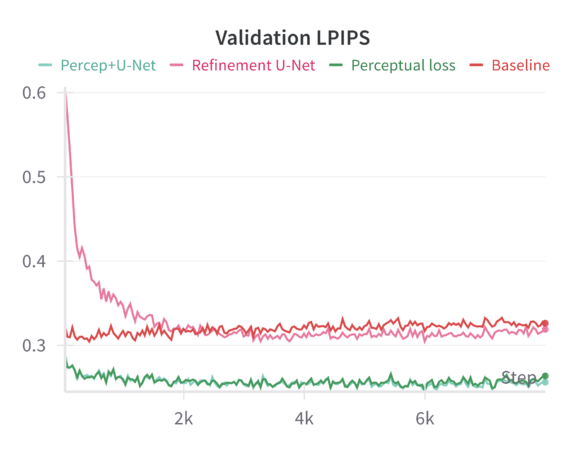

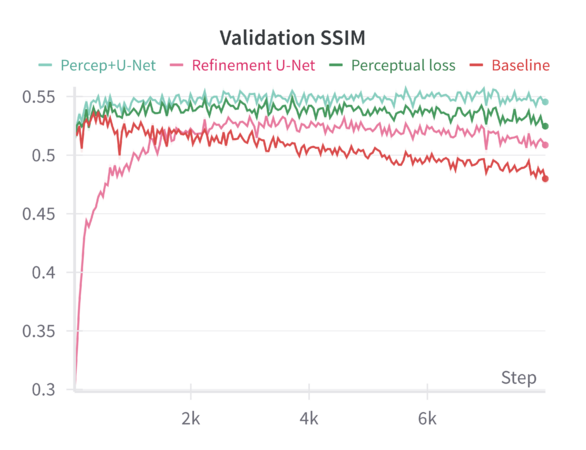

When tested on the filtered dataset of complete facades, the model—finetuned with random masking—generates reconstructions that are coherent but often lack sharp architectural details. Despite training for 200 epochs, the results remain somewhat blurry, and finer architectural features are not faithfully preserved. While random masking helps the model learn global structure, it does not explicitly guide it to emphasize fine details. The model, especially after resizing and losing some aspect ratio information, may rely heavily on learned general features rather than focusing on domain-specific architectural elements. Simply increasing training epochs without additional loss functions or refinement steps does not guarantee sharper reconstructions. The reported '''MSE loss''' on the validation set is 0.1, while other metrics such were: '''LPIPS = 0.326''' and '''SSIM = 0.479'''. | When tested on the filtered dataset of complete facades, the model—finetuned with random masking—generates reconstructions that are coherent but often lack sharp architectural details. Despite training for 200 epochs, the results remain somewhat blurry, and finer architectural features are not faithfully preserved. While random masking helps the model learn global structure, it does not explicitly guide it to emphasize fine details. The model, especially after resizing and losing some aspect ratio information, may rely heavily on learned general features rather than focusing on domain-specific architectural elements. Simply increasing training epochs without additional loss functions or refinement steps does not guarantee sharper reconstructions. The reported '''MSE loss''' on the validation set is 0.1, while other metrics such were: '''LPIPS = 0.326''' and '''SSIM = 0.479'''. | ||

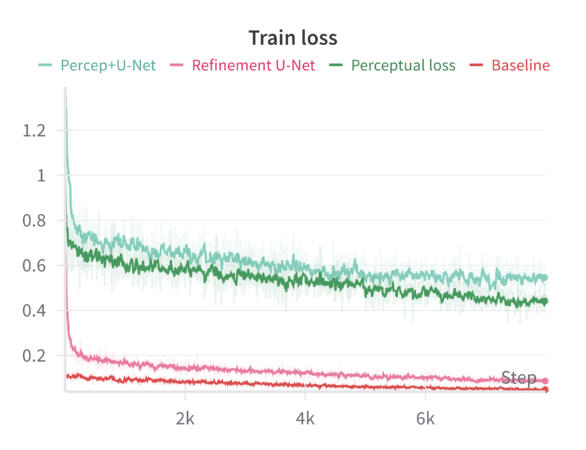

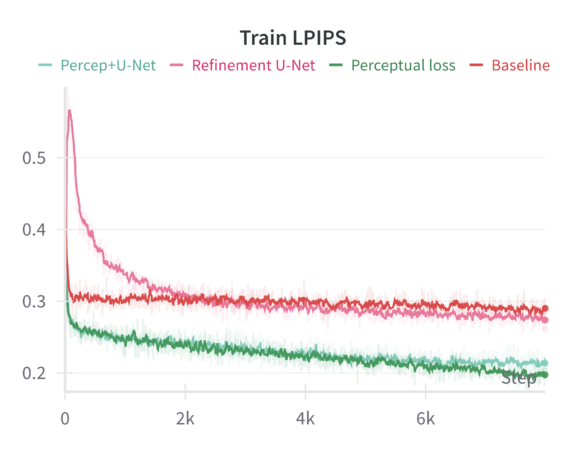

We can compare these baseline results across the different sharpening methods in the following graphs: | |||

<gallery mode="packed" widths="300px" heights="300px" style="margin: 10px;"> | |||

File:train_loss_comp.png|Train loss (base MSE) | |||

File:train_lpips_comp.png|Train LPIPS | |||

File:train_ssim_comp.png|Train SSIM | |||

File:val_loss_comp.png|Validation loss (MSE) | |||

File:val_lpips_comp.png|Validation LPIPS | |||

File:val_ssim_comp.png|Validation SSIM | |||

</gallery> | |||

---- | ---- | ||

Revision as of 19:09, 18 December 2024

Introduction

Motivation

The reconstruction of Venetian building facades is an interdisciplinary challenge, combining computer science, computer vision, the humanities, and architectural studies. Machine Learning (ML) and Deep Learning (DL) techniques offer a powerful solution to fill gaps in 2D facade data for historical preservation and visualization.

Facades vary significantly in structure, size, and completeness, making classical interpolation and rule-based methods inefficient. The Masked Autoencoder (MAE), a Transformer-based model, excels at learning patterns from large datasets and efficiently reconstructing missing regions. With its high masking ratio of 0.75, the MAE can learn robust representations while reconstructing large portions of missing data, making it seem ideal for processing the thousands of facades available to us in our dataset. The MAE captures both high-level structures (e.g., windows, arches) and fine details (e.g., textures, edges) by learning hierarchical features. Thus, it appears ideal for addressing the challenge of maintaining the architectural integrity of reconstructions, preserving stylistic elements crucial for historical analysis.

Venetian facades are valuable artifacts of cultural heritage. The MAE's ability to reconstruct deteriorated or incomplete structures supports digital preservation, enabling scholars and the public to analyze and visualize architectural history. By automating the reconstruction process, the MAE ensures scalable and accurate preservation of these historical assets. The Masked Autoencoder’s adaptability, scalability, and masking ratio of 0.75 make it uniquely suited to this reconstruction project. It efficiently handles large datasets, captures architectural details, and supports the digital preservation of Venice's rich cultural heritage.

Deliverables

link to the Github respository :

Project Timeline & Milestones

| Timeframe | Goals | Tasks |

|---|---|---|

| Week 4 |

|

|

| Week 5 |

|

|

| Week 6 |

|

|

| Week 7 |

|

|

| Week 8 |

|

|

| Week 9 |

|

|

| Week 10 |

|

|

| Week 11 |

|

|

| Week 12 |

|

|

| Week 13 |

|

|

| Week 14 |

|

|

Dataset

Description

The dataset comprises 14,148 building facades extracted from a GeoPandas file. Each facade is represented not by full raw images, but by compressed NumPy arrays containing pointcloud coordinates and corresponding RGB color values. These arrays are discretized into 0.2×0.2 bins, ensuring all images share a uniform “resolution” in terms of bin size. Although different facades vary substantially in physical dimensions, the binning ensures computational uniformity.

Statistical Analysis of Facade Dimensions:

- Mean dimensions: (78.10, 94.78)

- Median dimensions: (78, 79)

- 10th Percentile: (54, 35)

- 90th Percentile: (102, 172)

- 95th Percentile: (110, 214)

While the largest facades remain manageable within typical image model input sizes (e.g., 110×214), the wide variation in size presents a challenge for standard machine learning models, which generally require fixed input dimensions.

Preprocessing strategies

One initial idea was to preserve each facade’s aspect ratio and pad images to standard dimensions. However, padding introduces non-informative regions (often represented as black pixels) that can distort training. If such padding is considered “informative,” the model may learn to reconstruct black areas instead of meaningful details. Ignoring these regions in the loss function similarly leads to losing valuable detail along facade edges. This ultimately prompted the decision to simply resize each image to 224×224 pixels, which aligns with the pretrained MAE model’s requirements. Since all facades are smaller than or approximately equal to the target size, this resizing generally involves upsampling. Nearest-neighbor interpolation is used to preserve color values faithfully without introducing interpolated data that could confuse the reconstruction process.

Exploratory Data Analysis

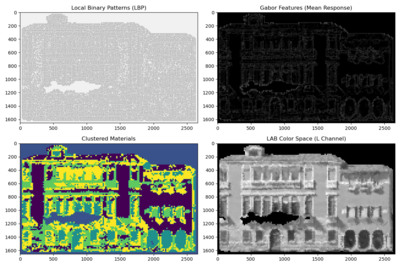

To gain deeper insights into the architectural and typological properties of Venetian facades, we conducted a series of exploratory textural and color analyses, including Local Binary Patterns (LBP), Histogram of Oriented Gradients (HOG), and Gabor filters. These will potentially provide supportive evidence for model selection, hyperparameters tuning, and error analysis.

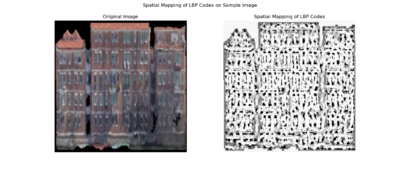

Local Binary Pattern

Local Binary Pattern (LBP) encodes how the intensity of each pixel relates to its neighbors, effectively capturing small-scale variations in brightness patterns across the surface. For the facade, this means LBP highlights areas where texture changes—such as the edges around windows, decorative elements, or shifts in building materials—are more pronounced. As a result, LBP maps reveal where the facade’s texture is smooth, where it becomes more intricate, and how these features repeat or vary across different sections of the building.

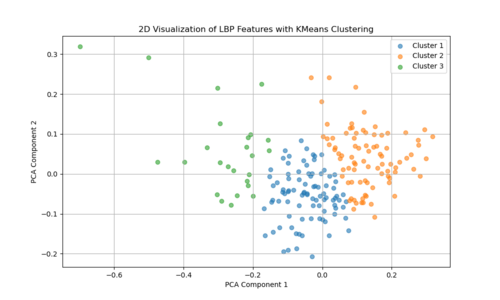

The two-dimensional projection of LBP features via PCA suggests that the textural characteristics of Venetian facades span a broad and continuous range, rather than forming a few discrete, well-defined clusters. Each point represents the LBP-derived texture pattern of a given image region or facade sample, and their spread across the plot indicates variation in texture complexity, detailing, and material transitions. If there were strong, distinct groupings in this PCA space, it would imply that certain facade types or architectural features share very similar texture signatures. Instead, the relatively diffuse distribution implies that Venetian facades exhibit a wide spectrum of subtle texture variations, with overlapping ranges of structural and decorative elements rather than neatly separable categories.

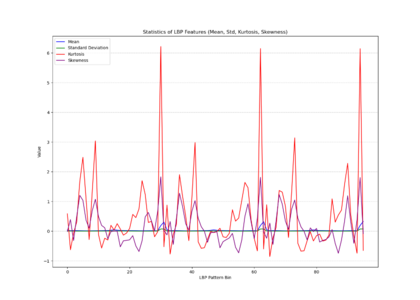

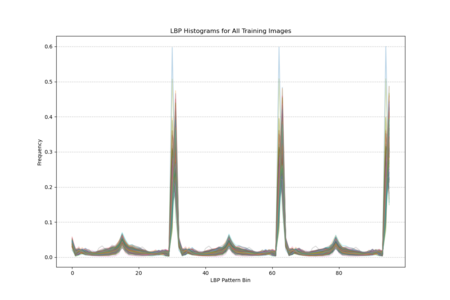

The histogram plot, displaying LBP distributions for all training images, shows pronounced peaks at certain pattern bins rather than a uniform or random spread, indicating that specific local texture patterns are consistently prevalent across the facades. The statistical plot (mean, standard deviation, kurtosis, skewness) further reveals that these patterns are not normally distributed; some bins have notably high kurtosis and skewness, indicating that certain textures appear more frequently and in a more clustered manner than others. In other words, Venetian facades are characterized by stable, repetitive textural signatures—likely reflecting repeated architectural elements and material arrangements—rather than exhibiting uniformly varied surface textures.

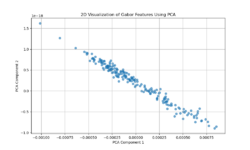

Gabor Filter

Gabor filters capture localized frequency and orientation components of an image’s texture. The PCA projection resulting in a near-linear distribution of points suggests that variation in the Gabor feature space is largely dominated by a single principal direction or a narrow set of related factors. This could imply that Venetian facades have a relatively uniform textural pattern, strongly influenced by a consistent orientation or repetitive decorative elements. In other words, the facades’ texture patterns may be comparatively regular and structured, leading to a low-dimensional representation where one main factor (like a dominant orientation or repetitive structural motif) explains most of the variation.

Histogram of Oriented Gradients (HOG)

Histogram of Oriented Gradients (HOG) features capture edge directions and the distribution of local gradients. The more scattered PCA plot indicates that no single dimension dominates the variability as strongly as in the Gabor case. Instead, Venetian facades exhibit a richer diversity of edge and shape information — windows, balconies, ornaments, and varying architectural details produce a more heterogeneous distribution of gradient patterns. This complexity results in a PCA space without a clear linear trend, reflecting more complexity and variety in structural features and contour arrangements.

In Summary: Gabor Feature suggest a more uniform, repetitive texture characteristic of Venetian facades, possibly reflecting dominant architectural rhythms or orientation patterns. HOG Features highlight a more diverse set of edge and shape variations, indicating that while texture may be consistent, the facades have numerous structural details and differing configurations that result in a more dispersed feature representation. Together, these indicate that Venetian facades are simultaneously texturally coherent yet architecturally varied in their structural details.

I have this code in my wiki but the images are overalping in the next section , how to make sure this doesnt happen

Methodology

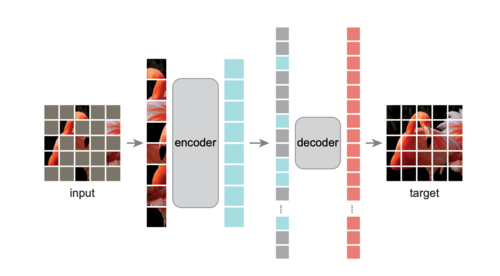

This project is inspired by the paper "Masked Autoencoders Are Scalable Vision Learners" by He et al., from Facebook AI Research (FAIR). The Masked Autoencoder splits an image into non-overlapping patches and masks a significant portion (40% to 80%) of them. The remaining visible patches are passed through an encoder, which generates latent representations. A lightweight decoder then reconstructs the entire image, including the masked regions, using these latent features and position embeddings of the masked patches.

The model is trained using a reconstruction loss (e.g., Mean Squared Error) computed only on the masked patches. This ensures the model learns to recover unseen content by leveraging contextual information from the visible patches. The simplicity and efficiency of this approach make MAE highly scalable and effective for pretraining Vision Transformers (ViTs) on large datasets.

By masking a substantial part of the image, MAEs force the model to capture both global structure and local details, enabling it to learn rich, generalizable visual representations.

For this project, two types of MAEs were implemented:

1) Custom MAE: Trained from scratch, allowing flexibility in input size, masking strategies, and hyperparameters.

2) Pretrained MAE: Leveraged a pretrained MAE, which was finetuned for our specific task.

Custom MAE

Data Preprocessing

Images were resized to a fixed resolution of 224x224 and normalized to have pixel values in the range [-1, 1]. The input images were divided into patches of size 16x16, resulting in a grid of 14x14 patches for each image. To improve model generalization, data augmentation techniques were applied during training, including random horizontal flips, slight random rotations, and color jittering (brightness, contrast, and saturation adjustments). These augmentations helped to introduce variability into the small dataset and mitigate overfitting.

We experimented with several image sizes and patch sizes to optimize the model performance. However, we ultimately adopted the same image size (224x224) and patch size (16x16) as the pretrained MAE to facilitate a direct comparison of results.

Model Architecture

The Masked Autoencoder (MAE) consists of an Encoder and a Decoder, designed to reconstruct masked regions of input images.

Encoder

The encoder processes visible patches of the image and outputs a latent representation. It is based on a Vision Transformer (ViT) with the following design:

- 12 Transformer layers

- Each layer includes Multi-Head Self-Attention (MHSA) with 4 attention heads and a Feed-Forward Network (FFN) with a hidden dimension of 1024.

- Patch embeddings have a dimension of 256.

- Positional embeddings are added to retain spatial information for each patch.

- A CLS token is included to aggregate global information.

The encoder outputs the latent representation of visible patches, which is passed to the decoder.

Decoder The decoder reconstructs the image by processing both the encoded representations of visible patches and learnable masked tokens. It includes:

- 6 Transformer layers

- Each layer has Multi-Head Self-Attention (MHSA) with 4 attention heads and a Feed-Forward Network (FFN) with a hidden dimension of 1024.

- A linear projection head maps the decoder output back to pixel values.

- The decoder reconstructs the masked patches and outputs the image in its original resolution.

Image Representation

- The input image is divided into patches of size 16x16, creating a grid of 14x14 patches for an image size of 224x224.

- Mask tokens replace the masked regions, and the decoder predicts their pixel values to reconstruct the full image.

By combining this Transformer-based encoder-decoder structure with the masking strategy and positional embeddings, the model effectively learns to reconstruct missing regions in the input images.

Masking Strategy

A contiguous block of patches is masked to simulate occlusion, which more accurately represents the incomplete facades in our data compared to a random masking strategy. A masking ratio of 50% was applied, meaning half of the patches in each image were masked during training.

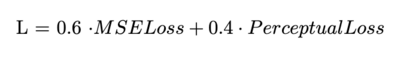

Loss Function

To optimize the model, I used a combination of Masked MSE Loss and Perceptual Loss. The Masked MSE Loss, following the original MAE methodology, is computed only on the masked patches to encourage reconstruction of unseen regions. The Perceptual Loss, derived from a pre-trained VGG19 network, enhances reconstruction quality by focusing on perceptual similarity, also restricted to masked regions, the final loss is a weighted combination.

Training and Optimization

The model was trained using the AdamW optimizer, with a learning rate scaled based on batch size and a cosine decay scheduler for gradual reduction of learning rates. A warm-up phase was incorporated to stabilize training during the initial epochs.

Evaluation Metrics

Performance was evaluated based on reconstruction quality (MSE + perceptual loss) and visual fidelity of reconstructed images.

Pre-trained MAE

The chosen model is a large MAE-ViT architecture pretrained on ImageNet. This model is configured as follows:

def mae_vit_large_patch16_dec512d8b(**kwargs):

model = MaskedAutoencoderViT(

patch_size=16, embed_dim=1024, depth=24, num_heads=16,

decoder_embed_dim=512, decoder_depth=12, decoder_num_heads=16,

mlp_ratio=4, norm_layer=partial(nn.LayerNorm, eps=1e-6), **kwargs)

return model

This configuration reflects a ViT-Large backbone with a 16×16 patch size, a high embedding dimension (1024), and a substantial encoder/decoder depth. The original MAE is designed with random patch masking in mind (i.e., a masking ratio of 0.75). This backbone was pretrained on ImageNet and, in the approach described here, is further adapted for facade reconstruction by finetuning certain components.

Freezing the Encoder and Extending the Decoder:

The encoder parameters are frozen, as they contain general pre-learned features from ImageNet. The decoder is then reintroduced and extended by adding more layers to better capture the architectural details of facades. Increasing the decoder depth provides a larger capacity for complex feature transformations and more nuanced reconstructions. A deeper decoder can better capture subtle architectural details and textures that are characteristic of building facades. Thus, these improvements can be attributed to the increased representational power and flexibility of a deeper decoding network.

The model, pretrained on ImageNet, “knows” a wide range of low-level and mid-level features (e.g., edges, textures) common in natural images. It can leverage these features for initial facade reconstruction tasks. The pretrained weights provide a strong initialization, speeding up convergence and improving stability. The model can quickly learn general color and shape distributions relevant to images. The ImageNet backbone does not specialize in architectural patterns. Without fine-tuning, it may overlook domain-specific features (e.g., window shapes, door frames). Thus, while it provides a broad visual language, it lacks a priori understanding of facade-specific semantics and must learn these details through finetuning.

Masking and sharpening strategies

Random masking:

The MAE model uses random masking, where patches are randomly removed and the network learns to reconstruct them. This ensures that the model evenly learns features across the entire image, promoting a more generalized understanding of the scene. Random masking encourages the model to be robust and to learn global structures. By forcing the network to infer missing content from sparse visual cues distributed throughout the image, it ensures that no single area dominates training. This results in the model being better at reconstructing a wide range of features, as opposed to overfitting to a particular region or pattern.

To improve reconstruction sharpness and detail fidelity, additional techniques were explored:

Perceptual Loss via VGG:

Perceptual loss functions compare high-level feature representations of the output and target images as extracted by a pretrained VGG network. By emphasizing the similarity of feature maps rather than raw pixel differences, the model is encouraged to produce more visually pleasing and structurally coherent reconstructions. This can help maintain repetitive features and stylistic elements characteristic of facades. The main goal is to enhance the quality and realism of the reconstructed images. Perceptual loss promotes the retention of global structural patterns and textures, making the result less blurry and more visually appealing, which is crucial for architectural details.

Refinement U-Net:

A refinement U-Net is introduced as a post-processing network to improve fine details. This network can:

- Denoise outputs.

- Reduce artifacts introduced by the initial reconstruction.

- Enhance edges and textures.

- Correct color inconsistencies.

This step involves passing the MAE output through a lightweight model that is trained to produce a cleaner, sharper final image.

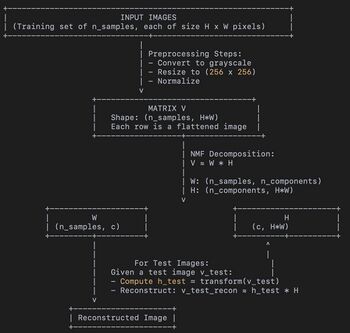

Non-negative Matrix Factorization

Non-Negative Matrix Factorization (NMF) has emerged as a powerful tool for dimensionality reduction and feature extraction, particularly suited to applications where data are inherently non-negative. In image processing tasks, NMF can be applied to reconstruct missing regions in incomplete images by decomposing an image into a linear combination of non-negative basis images and corresponding non-negative coefficients.

The incentive to use Nonnegative Matrix Factorization (NMF) for facade image reconstruction is based on several key points:

- NMF provides a relatively simple and easily adjustable model structure.

- NMF can handle outliers and noise more effectively than some complex models.

- The loss function in NMF is fully customizable, which allows for future incorporation of semantic or textural-level loss instead of relying purely on pixel-level errors.

- Compared to methods like Masked Autoencoders (MAE), NMF has significantly fewer hyperparameters, making the model more interpretable. This interpretability is particularly valuable in contexts such as cultural heritage preservation, where clarity in the model’s behavior is crucial.

NMF decomposes a dataset, represented as a large matrix, into two smaller matrices that contain only nonnegative values. The first matrix represents the building blocks or components of the data, while the second matrix specifies how to combine these components to approximate the original data. The number of components is determined by the level of detail required and is chosen based on the specific application. Each component typically captures localized patterns or features, such as windows, edges, or balconies, that are commonly found in facade structures.

The decomposition process aims to minimize the difference between the original data and its approximation based on the two smaller matrices. By ensuring that all values in the matrices are nonnegative, NMF tends to produce parts-based representations. These representations make it easier to interpret which features contribute to the reconstruction of specific areas in the data.

- Input Data (V):

- Converted to grayscale

- Resized to a fixed size (256 x 256)

- Flattened into a single vector.

- Stacking all these flattened vectors forms a data matrix V with shape (n_samples, img_height * img_width). Here, img_height * img_width = 256 * 256 = 65536 pixels per image. Thus, if we have n_samples images, V is (n_samples x 65536).

- NMF Decomposition (W and H):

- The NMF model factorizes V into two matrices: W and H.

- W (of shape (n_samples, n_components)) shows how each of the n_components is combined to construct each of the n_samples images.

- H (of shape (n_components, img_height * img_width)) encodes the “basis images” or components. Each of the n_components can be thought of as a part or a pattern that, when linearly combined (with non-negative weights), reconstructs the original images.

- We chose n_components = 60. That means the model tries to explain each image as a combination of 60 “parts” or “features” (stored in rows of H).

- Model Structure:

- It’s not a layered neural network - there are no traditional “layers” or “weights” in the neural network sense, just these two matrices. The optimization finds non-negative entries in W and H that best approximate V.

- Test Phase (Reconstruction):

- Loads and preprocesses them similarly.

- Uses the trained NMF model to get the coefficient matrix H_test (via model.transform) and then reconstructs the images as V_test_reconstructed = H_test × H.

- The reconstructed images can be combined with the original partial information to generate a final completed image.

While NMF-based reconstruction does not guarantee perfect results, particularly when large parts of the image are missing or the training data is not diverse enough, it often provides semantically meaningful completions. These results are generally better than those achieved through simple pixel-based interpolation. Further improvements could be achieved by integrating complementary techniques, such as texture synthesis or advanced regularization, or by incorporating prior knowledge about architectural structures.

Hyperparameter Choice of NMF

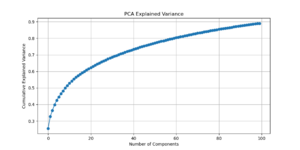

- Dimensionality Reduction Insights:

- PCA Pre-analysis: Before running NMF, apply Principal Component Analysis (PCA) to the training and test images. Analyze the explained variance of PCA components to estimate the intrinsic dimensionality of the data.

- Reconstruction Saturation: Determine a range of PCA components at which reconstruction quality stops improving significantly. This provides a strong initial guess for the number of components (n_components) in NMF.

- PCA Pre-analysis: Before running NMF, apply Principal Component Analysis (PCA) to the training and test images. Analyze the explained variance of PCA components to estimate the intrinsic dimensionality of the data.

- Hyperparameter Decisions for NMF:

- Number of Components (n_components): Choose a value informed by PCA and by evaluating the reconstruction performance. Strive to capture most of the variance without overfitting. (See figure of PCA explained variance)

- Initialization (init): Use nndsvda (Nonnegative Double Singular Value Decomposition with zeroing) for faster convergence and better initial component estimates.

- Regularization: Consider adding L1 or L2 regularization (via l1_ratio) to promote sparsity or smoothness in the learned components. Decisions on regularization parameters can be guided by cross-validation or domain-specific considerations (e.g., promoting certain architectural features while discouraging noise).

- Number of Components (n_components): Choose a value informed by PCA and by evaluating the reconstruction performance. Strive to capture most of the variance without overfitting. (See figure of PCA explained variance)

Results

Custom MAE

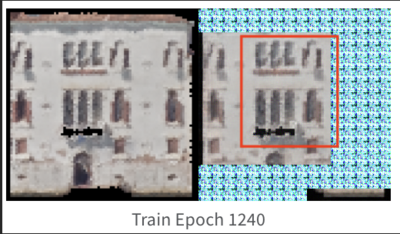

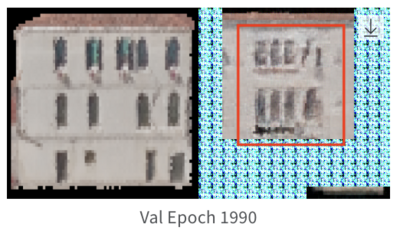

The results obtained from training our custom MAE were not entirely satisfactory, as the reconstructed images appeared quite blurry and lacked fine-grained details, having difficulty recovering features like windows or edges. The original motivation for training the model from scratch was to have greater flexibility in the model architecture. By building a custom MAE, we aimed to tailor the model's design to the specific challenges of our dataset, such as the unique structure of incomplete facades and the need to experiment with different parameters like masking strategies, patch sizes, and embedding dimensions. This level of customization allowed us to explore architectural decisions that might better align with the characteristics of our data, compared to relying on a pretrained model with fixed design choices but a major limitation in this setup was the size of the dataset, which contained only 650 images of complete facades. Training a deep learning model like an MAE, especially from scratch, requires a much larger dataset to effectively learn meaningful representations. With such a small dataset, the model struggled to generalize, focusing on coarse, low-frequency features such as the overall structure and color distribution, rather than capturing finer details like edges, textures, and patterns.

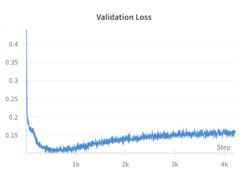

While the perceptual loss based on VGG19 features did enhance the reconstruction quality, its impact was limited by the small size of the training dataset. The model successfully began to recover higher-level patterns and global structures, such as windows and doors, but struggled to capture fine-grained details due to insufficient training data. During training, the validation loss decreased until approximately epoch 300, after which it began to increase, signaling overfitting. Interestingly, despite the rising loss, the visual quality of the reconstructed images continued to improve up to around epoch 700. This suggests that the model was learning to replicate patterns, such as windows and doors, observed in the training set and applying these learned structures to the facades in the validation set, resulting in more realistic-looking reconstructions.

Even though those results seem promising the model demonstrates a notable tendency to reproduce patterns from the training set onto validation facades that share visual similarities. The model memorizes certain features, such as the shape and position of windows or architectural elements, rather than learning generalized representations. In the images below, we observe that parts of the validation reconstructions resemble features seen in the training facades.

This suggests that the model is copying "learned patterns" rather than fully reconstructing unseen details, highlighting the limitations of training with a small dataset.

To overcome these limitations, we opted to use a pretrained model instead of training from scratch. The pretrained model, fine-tuned on our specific dataset, leveraged learned representations from large-scale training on diverse data, allowing it to generalize much better. By building on the rich, low- and high-level features already embedded in the pretrained network, the fine-tuned model produced significantly sharper and more realistic reconstructions. In the next section, we will present the results obtained using this pretrained model, highlighting its improved performance compared to the custom MAE.

Pre-trained MAE

Random masking

When tested on the filtered dataset of complete facades, the model—finetuned with random masking—generates reconstructions that are coherent but often lack sharp architectural details. Despite training for 200 epochs, the results remain somewhat blurry, and finer architectural features are not faithfully preserved. While random masking helps the model learn global structure, it does not explicitly guide it to emphasize fine details. The model, especially after resizing and losing some aspect ratio information, may rely heavily on learned general features rather than focusing on domain-specific architectural elements. Simply increasing training epochs without additional loss functions or refinement steps does not guarantee sharper reconstructions. The reported MSE loss on the validation set is 0.1, while other metrics such were: LPIPS = 0.326 and SSIM = 0.479.

We can compare these baseline results across the different sharpening methods in the following graphs:

NMF

The reconstructed facades, as illustrated by the provided examples, exhibit several noteworthy characteristics:

- Structural Consistency: The NMF-based approach recovers the general shape and broad architectural features of the buildings depicted. For example, window placements, building outlines, and large-scale contrasts are reasonably reconstructed. This demonstrates that the NMF basis images effectively capture dominant spatial patterns and that these patterns can be recombined to fill missing areas.

- Smoothness of Reconstruction: Due to the nature of NMF’s additive combinations, reconstructions tend to be smooth and free from negative artifacts. This results in visually coherent fills rather than abrupt transitions or unnatural patterns.

- Spatial Averaging Effects: While the global structure is often well approximated, finer details, such as small textural features or subtle contrast differences, are sometimes lost. NMF often produces reconstructions that appear slightly blurred or smoothed, especially in regions with fine-grained details.

- Sensitivity to Initialization and Rank Selection: NMF’s performance is sensitive to the choice of the latent dimensionality (i.e., the rank) and initialization. A suboptimal rank may lead to oversimplified basis images that cannot capture finer nuances, whereas too high a rank could lead to overfitting and less stable reconstructions.

- Limited Contextual Reasoning: Since NMF relies purely on the statistical structure within the image data and does not inherently model higher-level semantics, certain patterns, especially those not well-represented in the known portions of the image, are not reconstructed convincingly. Complex structural features or patterns unique to missing regions may be poorly recovered without additional constraints or priors.

Error Analysis

Linking Feature Representations to MAE Reconstruction

Gabor Features:

These features highlight repetitive, uniform texture patterns. Venetian facades often have recurring motifs—brick patterns, stone arrangements, or consistent color gradients. The Gabor analysis showed that the facades, in terms of texture frequency and orientation, vary along a mostly one-dimensional axis, suggesting a strong commonality.

Implication for MAE:

Since MAEs learn to fill in missing patches by leveraging global context, regular and repetitive textures are easier to guess. If part of a patterned surface (like a uniform wall area) is masked, the MAE can infer what belongs there from the context it has learned. As a result, reconstruction errors on these uniform, texturally consistent regions are likely to be low.

- HOG Features:

- HOG captures edge distributions, corners, and the shapes formed by architectural details—think windows, balconies, ornate moldings, and decorative columns. The PCA results for HOG were more scattered, indicating that Venetian facades do not have a single dominant “type” of edge pattern, but rather a wide variety of distinct and intricate details.

- Implication for MAE:

- Irregular, unique details are harder for the MAE to predict if they’re masked. Unlike repetitive textures, a unique balcony shape or an uncommon decorative element can’t be inferred as easily from nearby patches. The MAE may reconstruct something plausible in broad strokes but miss subtle intricacies, leading to higher error in these regions.

Structural Regularity and Symmetry

LBP analysis reveals pervasive repeating patterns and structural symmetry, providing a reference for understanding Masked Autoencoder (MAE) reconstructions. The MAE excels at reproducing these large-scale patterns—such as window alignments or arch sequences—aligning closely with LBP findings that emphasize overarching geometric coherence.

Texture Simplification

LBP indicates where surfaces transition from smooth to intricate textures. While these findings highlight the presence of finely detailed regions, the MAE reconstructions tend to simplify such areas. The loss of subtle textures arises from the MAE’s focus on recovering global structure rather than capturing every local nuance—an inherent limitation that LBP helps explain.

Smooth vs. High-Detail Areas

LBP distinctions between smooth and heavily ornamented areas correspond directly to MAE outcomes. Smooth surfaces, easily modeled due to their low variance, are faithfully reconstructed. In contrast, complex textures appear blurred, reflecting the MAE’s challenge in fully restoring the fine-scale intricacies that LBP so clearly delineates.

Hierarchical Architectural Features

LBP’s highlight of hierarchical architectural arrangements explains how the MAE manages certain architectural features well (e.g., aligning windows and maintaining facade outlines) while struggling with finer ornamental elements. This hierarchical perspective helps us understand why global forms are preserved, whereas delicate details fade.

Future Direction

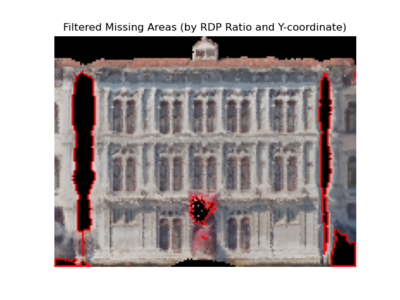

1) inference of MAE 2) dynamic masking that more precisely capture the areas that needs to be inpainted (figure right) 3) semantic consideration (reference paper and figure)

Conclusion

Appendix

References

He, K., Chen, X., Xie, S., Li, Y., Dollár, P., & Girshick, R. (2022). Masked Autoencoders Are Scalable Vision Learners. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 16000–16009. https://openaccess.thecvf.com/content/CVPR2022/papers/He_Masked_Autoencoders_Are_Scalable_Vision_Learners_CVPR_2022_paper.pdf