Terzani online museum

Maxime.jan (talk | contribs) |

Revision as of 23:22, 13 December 2020

Introduction

The Terzani online museum is a student course project created in the context of the DH-405 course. From the archive of digitized photos taken by the journalist and writer Tiziano Terzani, we created a semi-generic method to transform IIIF manifests of photographs into a web application. Through this platform, the users can easily navigate the photos based on their location, filter them based on their content, or look for similar images to the one they upload. Moreover, the colorization of greyscale photos is also possible.

The Web application is available following this link.

Motivation

Many inventions in human history have set the course for the future, especially those that helped people pass on their knowledge. Storytelling is an essential part of the human journey. From family pictures to the exploration of deep space, stories form connections. Historically, tales were mostly transmitted orally. This tradition slowly gave way to writing, which also kept evolving. Each method of transmission influenced its time and the way historians later perceived it. In that context, the 19th-century film camera transformed how stories were shared. For the first time in history, scenes could be captured accurately and instantaneously. Moreover, this invention soon became accessible to a large public as its production cost fell. As a result, an abundance of photographs was taken throughout the 20th century. However, today, the vast majority of them lie in drawers or in archives, at risk of being damaged or destroyed. One way to preserve this knowledge is to digitize it, but digitization alone neither revives it nor gives it the importance it deserves. Therefore, the aim of this project is to create a medium for these large collections of photos so that anyone, anywhere, can easily access them for research purposes or simply to explore a different time.

Our work specifically focuses on Tiziano Terzani, an Italian journalist and writer. During the second half of the 20th century, he traveled extensively in East Asia[1] and witnessed many important events. He and his team captured pictures of immense historical value. The Cini Foundation digitized some of his photos[2]. However, the foundation did not organise them, which makes navigating the collections tedious. Thus, we created a web application that facilitates access to Terzani's photo archive.

Description of the realization

The Terzani Online Museum is a web application with multiple features allowing users to navigate through Terzani's photo collections. The different pages of the website described below are accessible on the top navigation bar of the website.

Home

The home page welcomes the users to the website. It invites them to read about Terzani or to learn about the project on the about page.

About

The about page describes the website's features to the visitors. It guides them through the usage of the gallery, landmarks, text queries, and image queries.

Gallery

The gallery allows users to quickly and easily explore photo collections of specific countries. On the website's gallery, users can find a world map centered on Asia. On top of this map, a red overlay shows the countries for which photo collections are available. When a user clicks on any country, the associated photos are displayed on the right side of the page. Clicking on an image opens a modal window with the full-size photo - unlike the gallery, where cropped versions are shown - alongside its IIIF annotation. An option to colorize the image is also available in this modal window.

The gallery also lets users see at a glance the famous landmarks that are present in the photographs. To do so, users can click on the Show landmarks button to display markers at the locations of the landmarks. Clicking on a marker opens a small pop-up with the location name and a button that shows the photos of that landmark.

Search

On the Terzani Online Museum's search page, users can explore the photographs depending on what they depict. The requests can either be made by text, or by image. The search results are displayed similarly to the gallery page.

Text queries

Users are invited to write the content they are looking for in the Terzani photo collections. This content can correspond to multiple things. It can be general labels associated with the photographs, specific localized objects in the image, or it can be recognized text from the photos.

Below the text field, users can select two additional parameters to tune their queries. Only show bounding boxes restricts the results to the localized objects and crops their display around them. Search for exact word constrains the search to precise matches of the input, so partial matches are not displayed.

Image queries

Users can also upload an image from their device and obtain the 20 most similar pictures from all collections.

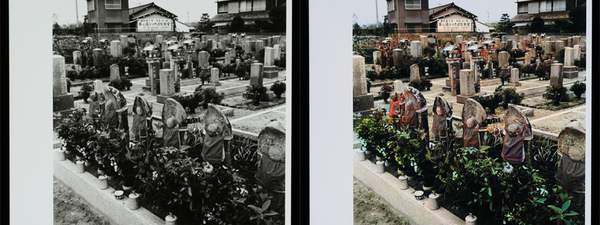

Photo colorization

To breathe life into the photo collections, we implemented a colorization feature. When users click on a photo and on the Colorize button, a new window displays the automatically colorized picture.

Note: this feature is currently disabled on the website because no GPU is available.

Methods

Data Processing

Acquiring IIIF annotations

As the IIIF annotations of photographs form the basis of the project, the first step is to collect them. The Terzani archive is available on the Cini Foundation server[3]. However, it does not provide an API to download the IIIF manifests of the collections. Therefore, we use Python's Beautiful Soup module to read the root page of the archive[4] and to extract all collection IDs. Using the collected IDs, we obtain the corresponding IIIF manifest of the collection using urllib. We can then read these manifests and only keep the annotations of photographs whose label explicitly states that it represents its front side.
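The filtering step described above can be sketched as follows. This is a minimal illustration, not the project's actual code: it assumes the manifests follow the IIIF Presentation API 2.x layout (`sequences` containing `canvases`), and the `front_marker` value `"recto"` is a hypothetical placeholder for whatever wording the archive's labels really use.

```python
import json
from urllib.request import urlopen


def load_manifest(url):
    """Download and parse one IIIF manifest (assumed to be Presentation 2.x JSON)."""
    with urlopen(url) as response:
        return json.load(response)


def front_side_canvases(manifest, front_marker="recto"):
    """Return the canvases whose label mentions the front-side marker.

    `front_marker` is a hypothetical placeholder, not the archive's real label.
    """
    kept = []
    for sequence in manifest.get("sequences", []):
        for canvas in sequence.get("canvases", []):
            label = str(canvas.get("label", ""))
            if front_marker.lower() in label.lower():
                kept.append(canvas)
    return kept
```

`load_manifest` is only a thin wrapper around urllib; the filtering itself works on any already parsed manifest dictionary.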

As we want to display the photos in a gallery sorted by country, we need to associate each IIIF annotation with the photo's origin. This information is available on the root page of the Terzani archive[5], as the collections' names take after their origin. As these names are written in Italian and are not all formatted the same, we manually map each photo collection to its country. In this process, we ignored the collections that have multiple country names.

Annotating the Photographs

Once in possession of the IIIF annotations of all the photographs, we annotate them using Google Cloud Vision. This tool provides a Python API with a myriad of annotation features. For the scope of this project, we decided to use the following:

- Object localization: Detects which objects are on the image with their bounding box.

- Text detection: Recognizes text on the image alongside its bounding box.

- Label detection: Provides general labels for the whole image.

- Landmark detection: If the image contains a famous landmark, provides the name of the place and its coordinates.

- Web detection: Searches if the same photo is on the web and returns its references alongside a description. We make use of this description as an additional label for the whole image.

- Logo detection: Detects any (famous) product logos within an image along with a bounding box.

For each IIIF annotation, we first read the image data into byte format and then use Google Vision's API to get the additional annotations. However, some of the information returned by the API cannot be used as it is. We processed bounding boxes and all texts the following way :

- Bounding boxes: To crop the photos around bounding boxes with the IIIF format, we need the top-left corner coordinates of each box as well as its width and height. For the OCR text, logo, and landmark detection, the coordinates of the bounding box are relative to the image, and thus we can use them directly.

  - As for object localization, the API normalizes the bounding box coordinates between 0 and 1. The width and height of the photo are present in its IIIF annotation, which allows us to "de-normalize" the coordinates.

- Texts: The Google API returns text in English for the various detections and in other identified languages for OCR text detection. To improve the search results, along with the original annotation returned by the API, we also add tags after performing some cleansing steps.

  - Lower case: Converts all the characters in the text to lowercase.

  - Tokens: Splits the strings into words using the nltk word tokenizer.

  - Punctuation: Removes all punctuation from the words.

  - Stem: Converts the words into their stem form using the Porter stemmer from nltk.

We then store the annotations and bounding box information together in JSON format.
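The bounding box handling can be illustrated with a short sketch. It is written under assumptions: vertices are passed as plain `(x, y)` pairs rather than Google Vision objects, the function names are hypothetical, and the URL pattern follows the IIIF Image API 2.x convention `{region}/{size}/{rotation}/{quality}.{format}` with `full` as the size.

```python
def to_pixel_box(vertices, width=None, height=None):
    """Turn polygon vertices into an (x, y, w, h) box in pixels.

    vertices: list of (x, y) pairs. Pass width and height only when the
    vertices are normalized to [0, 1], as for object localization.
    """
    xs = [x for x, _ in vertices]
    ys = [y for _, y in vertices]
    if width is not None and height is not None:
        # De-normalize using the photo dimensions from the IIIF annotation.
        xs = [x * width for x in xs]
        ys = [y * height for y in ys]
    left, top = min(xs), min(ys)
    return (round(left), round(top),
            round(max(xs) - left), round(max(ys) - top))


def iiif_crop_url(image_base_url, box):
    """Build an IIIF Image API URL that crops the image to the given box."""
    x, y, w, h = box
    return f"{image_base_url}/{x},{y},{w},{h}/full/0/default.jpg"
```

For instance, a normalized box covering the lower middle of a 400 x 200 photo becomes the pixel region `100,100,200,100`, which can be placed directly in the region segment of the image URL.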

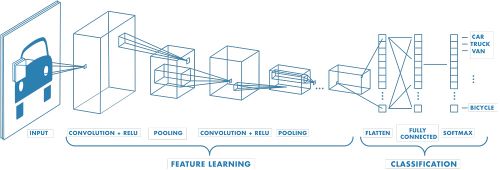

Photo feature vector

The feature vector of a photograph is used in the search for similar images. For each photo in the collection, we generate a 512-dimensional vector using ResNet to represent the image. The feature vector, which is the output of a convolutional neural network, is a numeric representation of the photo. Deep neural networks have recently been very successful at tasks such as classification and localization, with performance close to human cognition. The hidden layers of these networks learn intermediate representations of the image, which can therefore serve as a representation of the image itself. Hence, for this project, we used an already trained ResNet 18 to generate the feature vectors of the photo collections. We chose ResNet 18 because of its relatively small feature size. We take the feature vector from the output of the average pooling layer, where the feature learning part ends. As with the annotations, a JSON document stores the vectors.

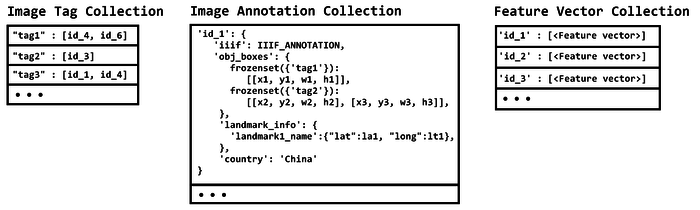

Database

As the data is primarily unstructured, owing to the variable number of tags, annotations, and bounding boxes an image can have, we use a NoSQL database and chose MongoDB because it represents data as documents. Using PyMongo, we created three different collections in this database.

- Image Annotations: This is the main collection. Each object has a unique ID and contains a IIIF annotation alongside Google Vision's additional annotations that have a bounding box (object localization, landmark, and OCR).

- Image Feature Vectors: This collection contains the mapping between the object ID and its corresponding feature vector.

- Image Tags: This is a meta collection on top of Image Annotations that speeds up text search queries: the server looks up a tag and directly gets the photos related to it. It contains one object for each annotation, bounded object, landmark, and text detected by Google Vision, and each object stores the list of IDs of the photos corresponding to it.
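The idea behind the Image Tags collection is an inverted index from tags to photo IDs. The sketch below shows one way such documents could be derived; the field names `_id`, `tags`, `tag`, and `photo_ids` are assumptions for illustration, not the project's actual schema.

```python
from collections import defaultdict


def build_tag_index(annotated_photos):
    """Build one document per tag, listing the IDs of the photos carrying it.

    annotated_photos: iterable of dicts with hypothetical keys
    "_id" (photo ID) and "tags" (list of cleaned tag strings).
    """
    index = defaultdict(set)
    for photo in annotated_photos:
        for tag in photo["tags"]:
            index[tag].add(photo["_id"])
    return [{"tag": tag, "photo_ids": sorted(ids)}
            for tag, ids in sorted(index.items())]
```

The resulting list of documents is in a shape that could be inserted into a MongoDB collection, so that a text query only needs one lookup per word instead of a scan over all annotations.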

Website

Back-end technologies

In order to create a Web Server that could easily handle the data in the same format we had used so far, it felt natural to choose a Python Framework. As the web application we wanted to build did not require complex features (e.g. authentication) we chose to use Flask which provides the essential tools to build a web server.

The server primarily processes the users' queries. Along with making a bridge between the client and the database, it also colorizes the photos that the users require.

Front-end technologies

To build our webpages, we use the traditional HTML5 and CSS3. To make the website responsive on all kinds of devices and screen sizes, we use Twitter's CSS framework Bootstrap. The client-side programming uses JavaScript with the help of the jQuery library. Finally, for easy usage of data coming from the server, we use the Jinja2 templating language.

Gallery by country

To create the interactive map, we used the open-source JavaScript library Leaflet. To highlight the countries that Terzani visited, we used the feature that allows us to display GeoJSON on top of the map. We used GeoJSON maps to construct such a file that contains the desired countries only.

When the user clicks on a country, the client makes a request to the server. In turn, the server queries the database to get the IIIF annotations of pictures matching the requested country. When the client gets this information back, it uses the image links from the IIIF annotations to display them to the user. The total number of results for a given country serves to compute the number of pages required to display all of them, with each page containing 21 images. To create the pagination, we use HTML <a> tags, which, on click, make a request to the server asking for the relevant IIIF annotations.
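The pagination arithmetic can be sketched as below. The page size of 21 comes from the text; the function names and the `(skip, limit)` return shape are hypothetical, chosen because they map naturally onto a database query.

```python
import math

PAGE_SIZE = 21  # images per gallery page, as stated above


def page_count(total_results, page_size=PAGE_SIZE):
    """Number of pages needed to display all results."""
    return math.ceil(total_results / page_size)


def page_bounds(page, page_size=PAGE_SIZE):
    """Return (skip, limit) for a 1-based page number."""
    if page < 1:
        raise ValueError("page numbers start at 1")
    return ((page - 1) * page_size, page_size)
```

With 22 results, for example, two pages are needed, and the second page skips the first 21 results.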

Map of landmarks

When the user clicks on the Show landmarks button, a request is made to the server asking for the name and geolocation of all landmarks in the database. With this information, we use the Leaflet library to create a marker for each landmark. Additionally, Leaflet allows creating a customized pop-up that opens when a marker is clicked. These pop-ups contain simple HTML with a button which, on click, queries for the IIIF annotations of the corresponding landmark.

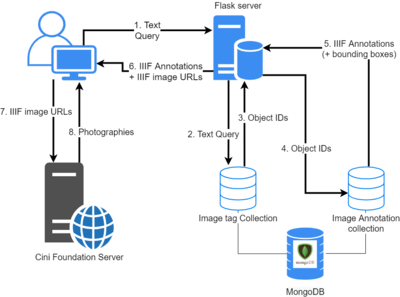

Search by Text

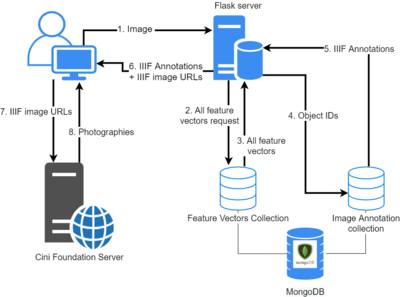

Querying photographs by text happens in multiple steps described below. The numbers correspond to the numbers on the schema on the right.

1. Users enter their query in the search bar and press the Search button. The client makes a request containing the user input to the server.

2. Upon receiving the user text query, the server tokenizes it into lower case words and removes any punctuation. The words also undergo stemming if the user did not indicate to search for an exact match. Then the server queries the Image Tag collection to retrieve the image IDs corresponding to each word.

3. The MongoDB database responds with the requested object IDs.

4. Upon receiving the object IDs, the server orders the images by text matching score. It then queries the Image Annotation collection to retrieve the IIIF annotation of these objects. If the user checked the Only show bounding boxes checkbox, the server also asks for the bounding box information.

5. The MongoDB database responds with the requested IIIF annotations and the bounding boxes if needed.

6. When the server gets the IIIF annotations, it constructs the IIIF image URLs of all results so that the resulting image has the shape of a square. However, if the user requested to show the bounding boxes only, then the server creates the IIIF image URLs so that they crop each photo around its bounding box. The client then receives this information from the server.

7. Using Jinja2, the client creates an HTML <img> tag for each image URL and queries the data hosted on the Cini Foundation server.

8. The Cini Foundation server answers with the image data, which can then be displayed to the users.
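The server-side part of a text query can be sketched as follows. Several points are assumptions rather than the project's documented behaviour: the tag index is a plain dictionary instead of a MongoDB collection, partial matching is modelled as a prefix test, the matching score is simply the number of tag matches per photo, and stemming is left out to keep the sketch dependency-free.

```python
import re
from collections import Counter


def tokenize(query):
    """Lowercase the query and keep only word tokens (punctuation is dropped)."""
    return re.findall(r"\w+", query.lower())


def rank_photos(query, tag_index, exact=False):
    """Return photo IDs ordered by how many tag matches they collect.

    tag_index: dict mapping a tag to a list of photo IDs (hypothetical shape).
    exact: when False, a tag matches if it starts with the query word
    (an assumed model of partial matching).
    """
    scores = Counter()
    for word in tokenize(query):
        for tag, photo_ids in tag_index.items():
            matched = (tag == word) if exact else tag.startswith(word)
            if matched:
                for photo_id in set(photo_ids):
                    scores[photo_id] += 1
    # Highest score first; ties broken by ID to keep the order deterministic.
    ordered = sorted(scores.items(), key=lambda item: (-item[1], item[0]))
    return [photo_id for photo_id, _ in ordered]
```

Under this model, the query "cat" also returns photos tagged "cathedral" unless `exact=True` is passed, which mirrors the behaviour of the Search for exact word option.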

Search by Image

The process to query similar photographs is similar to the text queries.

1. Users upload an image from their device. The client makes a request containing the data of this image to the server.

2. Upon receiving this request, the server computes the feature vector of the user's image using a ResNet 18, as described earlier. It then queries the database for all feature vectors.

3. The database answers with all feature vectors.

4. When the server has all the feature vectors, it computes the similarity between the user-uploaded image and each of the images returned by the database, using cosine similarity between the feature vectors. Then, it selects the 20 images with the highest similarity and queries the Image Annotation collection for the corresponding IIIF annotations of the photos.

5. to 8. The remaining steps proceed as in the text search case, without the bounding box requirement.
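The similarity ranking can be written in a few lines. This sketch uses pure Python and a dictionary of vectors for readability; the function names and data shape are hypothetical, and an actual implementation would more likely operate on arrays.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def most_similar(query_vector, stored_vectors, k=20):
    """Return the IDs of the k stored vectors most similar to the query.

    stored_vectors: dict mapping a photo ID to its feature vector.
    """
    scored = [(cosine_similarity(query_vector, vector), photo_id)
              for photo_id, vector in stored_vectors.items()]
    # Highest similarity first; ties broken by ID for a stable order.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [photo_id for _, photo_id in scored[:k]]
```

Because every stored vector is compared with the query one after the other, the cost grows linearly with the size of the collection.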

Image colorization

The tool for image colorization is called DeOldify. DeOldify uses a deep generative model trained with a technique called NoGAN to transform a black & white image into a coloured one. All details about this tool can be found on its GitHub page.

When the user clicks on the Colorise photo button, the client makes a POST request to the server with the selected image URL. In turn, the server initializes a DeOldify instance which applies its precomputed model to the selected black & white image and returns a colorised version. Before returning this image to the client, it caches it to avoid colourising the same image again.
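The caching behaviour can be illustrated with a small wrapper. The text does not say where the cache lives, so the in-memory dictionary here is an assumption, and `colorize` stands for any function that takes an image URL and returns the colorized result (for example a call into DeOldify); the class name is hypothetical.

```python
class ColorizationCache:
    """Remember colorized results so each image URL is processed only once."""

    def __init__(self, colorize):
        self._colorize = colorize  # hypothetical callable: image URL -> result
        self._results = {}

    def get(self, image_url):
        """Return the cached result, computing it on the first request."""
        if image_url not in self._results:
            self._results[image_url] = self._colorize(image_url)
        return self._results[image_url]
```

A second request for the same URL then returns immediately, without running the expensive model again.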

Quality assessment

Assessing the quality of our product is rather tricky. While our project makes use of many technologies (Google Vision, DeOldify, ...), we did not train any model or modify them in any way. Thus, we will not evaluate their quality. As the Terzani Online Museum is a user-centered project, we thought it made more sense for the users, not the developers, to assess its quality. We therefore gathered feedback in the form of guided and non-guided user testing. We will still, however, provide our own critical views regarding the data processing part.

Data Processing

In an ideal scenario, we would have liked data processing to be fully generic and automated. The scraping of IIIF annotations from the Cini Foundation server, however, requires some manual work. Indeed, the lack of an API to easily access the IIIF manifests forced us to parse the structure of the Terzani archive webpage. Therefore, if the structure of this page were to change, the code we wrote to scrape the IIIF annotations would no longer work. Moreover, as the country of a collection is not available in the IIIF annotations, we had to set it manually from the names of the collections. The rest of the pipeline, however, is fully automated and generic. This is why we consider that we have developed a semi-generic method, where some manual work - scraping IIIF annotations and assigning a country to each of them - has to be performed before running the automated script.

Concerning the creation of new tags and annotations for the photographs using the Google Vision API, we found the results to be generally reliable and coherent. However, as the API does not provide any control over the languages for the OCR, we noticed that it very often misses words written in Chinese, Japanese, Vietnamese, Hindi, etc. Results for English text, however, are very impressive and sometimes more precise than the human eye.

The annotation step is also very time-consuming. Because the images are not stored on Google Cloud Storage, the process cannot happen asynchronously, which leads to a large amount of waiting time. A further improvement of this project would be to make our own code asynchronous to accelerate the process. Moreover, we could also parallelize the computation of feature vectors to optimize the data processing even further.

Website user feedback

Text queries

The first feedback we got about text queries was that they were sometimes counter-intuitive. Indeed, we originally thought it would be too strenuous for the user to search for exact words to find a match and therefore resorted to partial matches. This, however, creates unexpected results where you get photos of cathedrals when you were looking for cats, and carving photos when searching for cars. We answered that concern by adding the Search for exact words checkbox, which disables partial matches.

Otherwise, the users were mostly happy with this feature and had fun making queries. The failing cases (e.g. the bounding boxes for "dog" also show photos of a pig and a monkey) were seen as more amusing than annoying. We asked the users to rate on a scale of 1 to 7 how relevant the results of their queries were (1 being irrelevant and 7 entirely relevant). The average relevancy score over all testers was approximately 6.2, which suggests that this feature is working well.

Image queries

Concerning the image queries, we received the remark that it would be more practical to display the uploaded image next to the results. We therefore decided to implement this suggested feature.

Users were mostly pleased with the results, though not as impressed as for the text queries. We also asked them to rate the relevancy of this feature's results on a scale of 1 to 7 and got an average score of 5.8. It should however be noted that this feature was tested with a subset of 1000 images from the 8500 available ones. Increasing the number of potential results would also increase the chances of finding similar images.

While users did not complain about query time, the image queries take about 1-2 seconds to execute. This is due to the fact that the feature vector of the uploaded image has to be sequentially compared with all feature vectors from the database. As a further optimization, we could parallelize this computation to make it scale better and faster overall.

Gallery

Many testers noticed the same unusual behavior with the Show landmarks button. It would be more intuitive if, once clicked, this button became Hide landmarks. The current behavior leads users to repeatedly click on the button, adding the same markers again, and resulting in the shadows of the markers growing each time. We should seek to correct this bug in a future version.

Code Realisation and GitHub Repository

The GitHub repository of the project is at Terzani Online Museum. There are two principal components of the project. The first one corresponds to the creation of a database of the images with their corresponding tags, bounding boxes of objects, landmarks and text identified, and their feature vectors. The functions related to these operations are inside the folder (package) terzani and the corresponding script in the scripts folder. The second component is the website, which is in the website directory. The details of installation and usage are available on the GitHub repository.

Limitations/Scope for Improvement

While some limitations and improvements for this project have already been cited in the Quality Assessment, we still provide the complete list in this section.

- Due to lack of time, we were unable to change the behavior of the Show landmarks button on the gallery page, which keeps on adding markers.

- The partial matches for the text queries could be enhanced with natural language processing to avoid getting semantically different results (e.g. cathedral when looking for cat).

- An option to search for similar photographs could be added on the modal window displaying the details of an image.

- The confidence scores of Google Vision's annotations could be used to sort the results.

- The pagination currently shows all the page numbers. It could be reduced to a number picker instead.

- The comparison of a feature vector to the whole feature vector database could be parallelized.

- The creation of the database could be made asynchronous and/or parallel.

Extension idea

An idea to bring this project further would be to couple it with Terzani's writings. As Terzani has written many books and articles about Asia, it would be interesting to try to match his photographs with his texts. This way, the user experience would be enhanced by textual support.

Schedule

We spent the first week setting the scope of our project. The original idea was only to colorize the photographs from the Terzani archive, but we quickly realized that powerful software capable of doing it already existed. Therefore, we moved the goalposts during week 5 and made the following schedule for the Terzani online museum.

☑: Completed ☒: Partially completed ☐: Did not undertake

| Timeframe | Tasks |
|---|---|
| Week 5-6 | |
| Week 6-7 | |
| Week 7-8 | |
| Week 8-9 | |
| Week 9-10 | |
| Week 10-11 | |
| Week 11-12 | |
| Week 12-13 | |
| Week 13-14 | |