VenBioGen

Introduction

In this project, we use generative models to write fictional biographies of Venetian people who lived before the 20th century. Our original motivation was to observe how such a model would pick up on underlying relationships among Venetian figures of past centuries, as well as their relationships with people in the rest of the world. These relationships may not surface in every generated biography, but the model has the potential to offer fresh perspectives on historical tendencies.

Motivation

This project explores the intersection of the Humanities and Natural Language Processing (NLP), specifically text generation.

By generating fictional biographies of Venetians up to and including the 19th century, this model allows us, on the one hand, to evaluate the current state, progress and limitations of text generation. On the other hand, and even more worthwhile, it lets us explore whether the model learns underlying historical tendencies, most commonly in the form of international or inter-familial relationships.

Project Plan and Milestones

Milestone 1 (up to 1 October)

- Brainstorm project ideas

- Prepare presentation to present two ideas for the project

Milestone 2 (up to 14 November)

- Finalize project planning

- Build generative model, using Python with TensorFlow 1.x (to guarantee compatibility with pre-built GPT-2 and BERT models)

- Fine-tune GPT-2 model

- Fine-tune BERT

- Connect both models to create generative pipeline

- Examine generated biographies and the acceptance threshold, and consider further improvements

Milestone 3 (up to 20 November)

- Create frontend for interface (leveraging Bootstrap and jQuery)

- Create backend for interface (using Flask), and connect it to the generative module

- Finalize the RESTful API for communication between both ends

Milestone 4 (up to 5 December)

- Add post-processing date adjustment module

- Add historical realism enhancement module

- Assess feasibility and costs involved with outsourcing evaluation to a platform such as Amazon Mechanical Turk

Milestone 5 (up to 11 December)

- Decide on the evaluation procedure

- Conduct model evaluation

- Review code

- Finalize documentation

- Finalize report

- Results & Result analysis section

- Screenshot of interface

- Limitations

- Challenging parts ?

- Interface section

- Include link to repo


Interface

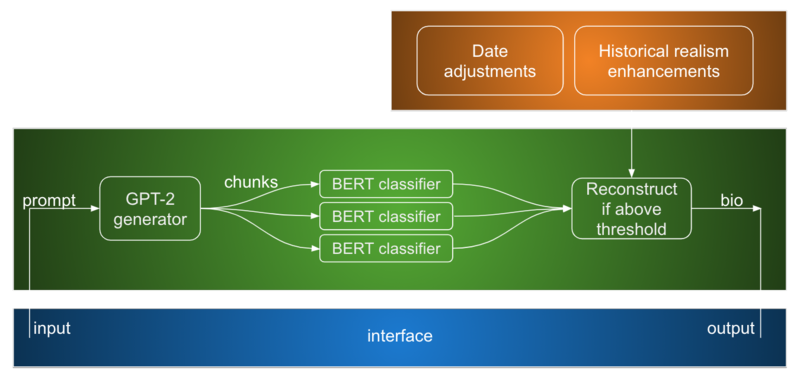

Generative module

Data processing pipeline

As a first step in the processing pipeline, we used the wikipediaapi [1] and wikipedia [2] packages to query articles from the Wikipedia category "People from Venice" [3].

The main task of this part of the data processing pipeline was to map Wikipedia HTML text to human-readable text, which includes eliminating section titles as well as removing references, lists, and tables. Detecting these proved to be a challenging task, and a few articles in the dataset still contain undesirable elements.
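As a rough illustration of this cleaning step, the sketch below strips section titles, list items, and numeric reference markers from an article. It assumes the article is already available as plain text in which section titles appear as `== Title ==` lines and references as bracketed numbers; the function name `clean_article_text` is hypothetical, and this is not the project's actual code.

```python
import re


def clean_article_text(raw: str) -> str:
    """Drop section titles and list items, and remove reference markers."""
    kept = []
    for line in raw.splitlines():
        stripped = line.strip()
        if not stripped:
            continue
        # Assumed heading format: "== Title ==" (any number of "=" signs).
        if re.fullmatch(r"=+\s*.*?\s*=+", stripped):
            continue
        # Bulleted or numbered list items.
        if re.match(r"^([*#\-•]|\d+\.)\s+", stripped):
            continue
        # Inline reference markers such as "[12]".
        stripped = re.sub(r"\[\d+\]", "", stripped)
        # Collapse any double spaces left behind by the removal.
        stripped = re.sub(r"\s{2,}", " ", stripped).strip()
        kept.append(stripped)
    return "\n".join(kept)
```

Simple line-based rules like these miss tables and unusual markup, which is consistent with a few articles still containing undesirable elements.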

We chose to train the generative model with biographies of people having lived prior to the 20th century, as we would like the generated text to mainly reflect the historical aspects of Venetian figures.
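One possible way to apply such a filter is sketched below. It assumes that each biography opens with a parenthetical containing birth and death years, as Wikipedia lead sentences usually do, and keeps a biography only if the latest year found there is 1900 or earlier. The function name, the cutoff parameter, and the decision to drop biographies without a dated parenthetical are all assumptions made for illustration.

```python
import re

# Three- or four-digit years up to 1999; day numbers have at most two digits.
YEAR_RE = re.compile(r"\b(1[0-9]{3}|[1-9][0-9]{2})\b")


def lived_before_20th_century(biography: str, cutoff: int = 1900) -> bool:
    """Return True if the first parenthetical only mentions years <= cutoff."""
    match = re.search(r"\(([^)]*)\)", biography)
    if match is None:
        return False  # no dates found: excluded in this sketch
    years = [int(y) for y in YEAR_RE.findall(match.group(1))]
    return bool(years) and max(years) <= cutoff
```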

The final dataset used for training comprises 1164 biographies. Further research could include more biography sources to build a richer corpus, which would result in more diverse generated biographies.

Text generation

GPT-2

The GPT-2 submodule is used for text generation. It consists of a GPT-2 [1] model that has been fine-tuned on Venetian biographies so that, given a prompt, it generates a made-up biography. This submodule hence plays the role of the "generator" in our GAN-inspired module.

BERT

The BERT submodule is used for text assessment. It consists of a BERT model [2] that is trained to assess the realism of texts. Each biography is split into chunks before being fed to this submodule, which assigns each chunk a realism score. The smallest of these scores determines whether a new biography should be generated. This submodule serves as the "discriminator" in our GAN-inspired module.
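The interplay between the two submodules can be sketched as a generate-and-check loop. In the sketch below, `generate` and `score_chunk` are placeholder callables standing in for the fine-tuned GPT-2 and BERT models; the chunk size, threshold value, and attempt limit are illustrative assumptions rather than the project's settings.

```python
from typing import Callable, List, Tuple


def split_into_chunks(text: str, max_words: int = 50) -> List[str]:
    """Split a text into consecutive chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words])
            for i in range(0, len(words), max_words)]


def generate_accepted_biography(
    prompt: str,
    generate: Callable[[str], str],       # stand-in for the GPT-2 generator
    score_chunk: Callable[[str], float],  # stand-in for the BERT scorer
    threshold: float = 0.5,               # assumed acceptance threshold
    max_attempts: int = 5,
    max_words: int = 50,
) -> Tuple[str, float]:
    """Regenerate until the lowest chunk score reaches the threshold."""
    best_text, best_score = "", float("-inf")
    for _ in range(max_attempts):
        candidate = generate(prompt)
        chunks = split_into_chunks(candidate, max_words)
        if not chunks:
            continue
        # A biography is only as realistic as its weakest chunk.
        score = min(score_chunk(chunk) for chunk in chunks)
        if score > best_score:
            best_text, best_score = candidate, score
        if score >= threshold:
            break
    return best_text, best_score
```

Taking the minimum rather than the mean means a single implausible passage is enough to reject a candidate. If no candidate reaches the threshold, this sketch returns the best one seen.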

Enhancements module

Date Adjustments

The date adjustments submodule is used to increase the realism of the generated biographies. This is done in several ways:

- The years are fetched using regex and then scaled in order to make sure that a biographee's lifespan is realistic.

- The centuries mentioned are fetched as well and are adjusted so that they match the years mentioned in the biography.

- Events (determined by verbs) are fetched and we search for "birth" and "death" events. Their corresponding dates are fetched and adjusted so that they are realistic.
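The year-scaling and century-matching steps above could look roughly like the following sketch. It assumes that every four-digit number between 1000 and 1999 is a year, that the earliest year mentioned is the birth year, and that a lifespan longer than 90 years is unrealistic; these choices and the function names are illustrative only.

```python
import re

# Assumption: any standalone number from 1000 to 1999 is a year.
YEAR_RE = re.compile(r"\b(1[0-9]{3})\b")


def rescale_years(text: str, max_lifespan: int = 90) -> str:
    """Compress all years linearly so they span at most max_lifespan years."""
    years = [int(y) for y in YEAR_RE.findall(text)]
    if not years:
        return text
    first, last = min(years), max(years)
    span = last - first
    if span <= max_lifespan:
        return text  # already plausible, leave the text untouched

    def shift(match: "re.Match[str]") -> str:
        year = int(match.group(1))
        # Keep the earliest year fixed and scale the offset of every other year.
        return str(first + round((year - first) * max_lifespan / span))

    return YEAR_RE.sub(shift, text)


def century_of(year: int) -> int:
    """Return the century a year belongs to (1601 to 1700 is the 17th)."""
    return (year - 1) // 100 + 1
```

For instance, a text mentioning 1500, 1600 and 1700 would be rewritten to mention 1500, 1545 and 1590, preserving the relative order of events. `century_of` can then be used to check that any century mentioned agrees with the adjusted years.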

Entity Replacement

The entity replacement submodule is used to increase historical realism. It searches the generated biographies for historical figures and checks whether they belong to the era indicated by the dates in the biography. If they do not, a replacement is fetched from a historical figures dataset [3], chosen to be as close as possible to the original figure (i.e. in terms of geography and occupation).
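A minimal sketch of the replacement lookup is given below. The `Figure` fields are an assumed schema, not the actual columns of the historical figures dataset, and the similarity measure (one point each for matching country and occupation) is a deliberately simple stand-in for whatever closeness criterion the project uses.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Figure:
    # Hypothetical schema; the real dataset's columns may differ.
    name: str
    birth_year: int
    death_year: int
    country: str
    occupation: str


def find_replacement(original: Figure, candidates: List[Figure],
                     era_start: int, era_end: int) -> Optional[Figure]:
    """Pick the candidate alive in the era that best matches the original."""

    def alive_in_era(figure: Figure) -> bool:
        return figure.birth_year <= era_end and figure.death_year >= era_start

    def similarity(figure: Figure) -> int:
        return (int(figure.country == original.country)
                + int(figure.occupation == original.occupation))

    eligible = [f for f in candidates
                if alive_in_era(f) and f.name != original.name]
    if not eligible:
        return None
    return max(eligible, key=similarity)
```

For example, if a biography dated 1600 to 1650 mentions Giuseppe Verdi (1813 to 1901), a candidate list containing Claudio Monteverdi, Galileo Galilei and Henry Purcell would yield Monteverdi, the only Italian composer alive in that period.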

Quality assessment

To evaluate the generated biographies' realism, three types of biographies were prepared: real biographies of Venetians, which had been used for model training; unadjusted fake biographies of Venetians; and adjusted fake biographies of Venetians. To create the fake biographies, it was necessary to temporarily abandon the semi-rigid prompt format that we normally use and make available through the interface. Otherwise, the first sentence of each biography would have given away its real or fake nature. The prompts used were instead the first sentences of Wikipedia articles about other Italian people (for example, from Naples or Bologna), slightly changed, when applicable, so that they related to Venice instead of the person's true birthplace.

In total, 160 biographies were read and evaluated: 80 real biographies, 30 unadjusted fake biographies, and 50 adjusted fake biographies. More biographies were dedicated to the adjusted version since they are the true result of all modules, but 30 were still dedicated to the unadjusted biographies in order to provide a baseline for the output of the GAN-inspired generative module alone.

Results

Evaluation metrics

Among the 160 evaluated biographies, out of which 80 were real and 80 were fake (either adjusted or not):

- 75.0% of the biographies classified as real were actually real, so the evaluators' precision was 0.75. (The remaining 25.0% of biographies classified as real were actually fake.)

- 77.63% of the biographies classified as fake were actually fake, so the evaluators' negative predictive value was 0.78. (The remaining 22.37% of biographies classified as fake were actually real.)

- 78.75% of the real biographies were correctly classified as real; that is, the evaluators' recall, or sensitivity, was 0.79. (The remaining 21.25% were misclassified as fake.)

- 73.75% of the fake biographies were correctly classified as fake, so the evaluators' specificity, or true negative rate, was 0.74. (The remaining 26.25% of fake biographies were misclassified as real.)
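The four figures above can be reproduced from a single confusion matrix, treating "real" as the positive class. The counts used below are not stated in the text; they are inferred from the reported percentages and the 80/80 split (63 real biographies classified as real, 17 classified as fake, 21 fake biographies classified as real, 59 classified as fake), and should be read as a reconstruction.

```python
def evaluator_metrics(tp: int, fp: int, fn: int, tn: int) -> dict:
    """Compute the four rates, with 'real' as the positive class."""
    return {
        "precision": tp / (tp + fp),                  # real among classified-real
        "negative_predictive_value": tn / (tn + fn),  # fake among classified-fake
        "recall": tp / (tp + fn),                     # real biographies found
        "specificity": tn / (tn + fp),                # fake biographies found
    }


# Counts inferred from the reported percentages (an assumption, see above).
metrics = evaluator_metrics(tp=63, fp=21, fn=17, tn=59)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```

Precision and negative predictive value are computed over what the evaluators predicted, whereas recall and specificity are computed over what the biographies actually were.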

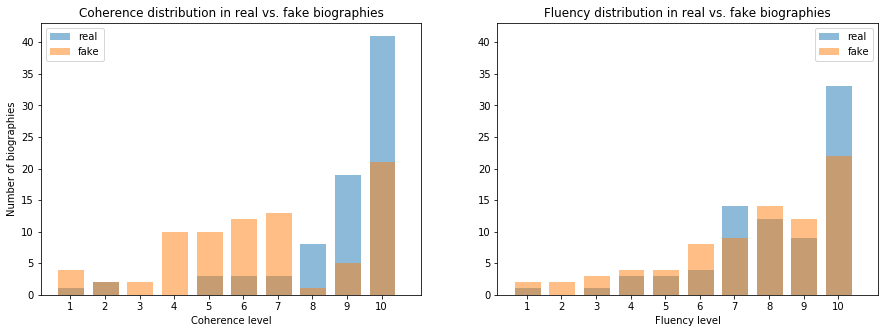

The average fluency and coherence results are presented below. Fluency measured how naturally the text read and flowed, including grammar, while coherence measured consistency within a biography in terms of dates, professions and achievements, references to other people, naming, etc.

- The average fluency for real biographies was 8.25, while for the fake biographies it was 7.525.

- The average coherence for real biographies was 8.8, while for the fake biographies it was 6.625.

The following histograms present a visualization of fluency and coherence over their 10 point scale for each type of biography:

Adjusted versus unadjusted biographies

Final qualitative assessment

Two examples of generated biographies

Below, we present two generated biographies that evaluators incorrectly judged to be real. The first was adjusted while the second was not.

Francesco Faà di Bruno (29 March 1625 – 27 March 1688) was an Italian priest and advocate of the poor, a leading mathematician of his era and a noted religious musician. [SEP] Born in Venice, Faà di Bruno studied mathematics at the University of Padova and then entered the Society of Jesus in 1639. He returned to Padova in 1645, where he was elected priest of the Dorsoduro, the Jesuits' highest office, and was ordained in 1648. In 1649 he was appointed as the Patriarch of Venice, a position he held until 1651.In 1658 he was appointed cardinal protonotary archpriest of Santa Maria in Trivio and then as Patriarch of Venice in 1664, again in 1667. He was born in Venice and died in Rome.

Leo Nucci (16 April 1642 - 24 September 1691) was an Italian operatic baritone, particularly associated with Verdi and Verismo roles. [SEP] He was born in Venice, and was one of the most notable pupils of the celebrated operatic singer Francesco Sacrati. He was born to a rich family, and operated a great deal of personal and professional capital in opera, music publishing, and the theatrical production of plays. In particular, he established a correspondence with the composer Carlo Goldoni, and with the musical director Luca Carlevaris, that would influence the musical practice of his generation. His output included libretti for musicals by Giulio Cesare Dell'Acqua, Carlo Gozzi, Carlo Bevilacqua, the composer Carlo Bernardi, the composer Giovanni Antonio Fetti, the pianist Leon Battista Gritti, the singer Giulia Cesare da Rimini, and the music director Luca Carlevari. Nucci also arranged for the music of Francesco Grassi and the music of Andrea Ricci, two of the most important music directors of the Venetian era. He was the first to do this in over a decade, and the first to do so in a major role. Nucci brought to the table the musical virtuoso talents of Goldoni, Ciera, Carlo Coccia, Luca Carlevaris, the composer Leon Battista Gritti, and the music director Luca Carlevari. He played a role in Francesco Grassi's debut production of Zatta er mazzarellike, the music for the La Fenice frescoes in the Accademia.

Links

References

- [1] Alec Radford, Jeff Wu, Rewon Child, David Luan, Dario Amodei, Ilya Sutskever, Language Models are Unsupervised Multitask Learners, 2019

- [2] Jacob Devlin, Ming-Wei Chang, Kenton Lee, Kristina Toutanova, BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding, 2019

- [3] N/A, Historical Figures, made available under a "Kaggle InClass" competition