Terzani online museum

Introduction

Many inventions in human history have set the course for the future. One of them is the camera, which has transformed the way we form bonds and share stories.

Storytelling is an essential part of the human journey. From what one does after waking up in the morning to the exploration of deep space, connections are formed through stories. Until recently, tales were mostly passed on orally[1]. The invention of the camera[2] began to change this landscape: people could capture live action in images. This later led to the invention of the video camera[3] and of color photos and videos[4] [5], and today augmented reality[6] lets us see nearly the entire world as if we were living the moment. Moreover, many important historical events have been captured in photographs for a long time now. To better understand the circumstances of those times, one gains immensely by looking at the photographs, if not videos. Even outside of research, it is fascinating to revisit history and see pictures of past times. However, going through countless images one by one is not productive. We therefore want to create an online platform that helps people access these photographs and travel through them.

Tiziano Terzani[7] was an Italian journalist and writer. He traveled extensively in East Asia[8] and witnessed many important events. During his travels, he and his team took many pictures. The Cini Foundation digitized some of his photo collections, the Terzani photo collections. Through this project, we bring the photographs taken during his trips to South Asian countries to an online platform and present them to a 21st-century digital audience.

Through this platform, viewers can search the photos by location, by text, or by uploading an image to find similar images. As an experimental feature, users can also try to colorize the pictures.

Methods

Data Processing

Acquiring IIIF annotations

As the whole project is based on IIIF annotations of photographs, we must first collect them. The Terzani archive is hosted on the Cini Foundation server[9]. However, the server does not provide any API to download the IIIF manifests of all collections at once. We therefore used the Python module Beautiful Soup to parse the root page of the archive[10] and extract all collection IDs from it. Once we had collected these IDs, we could request each corresponding IIIF manifest using urllib. We then read each manifest and kept only the annotations whose label explicitly states that they represent the recto of the photograph.
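The manifest download and the recto filtering can be sketched as follows. This is a minimal sketch, not the project's code. It assumes the manifests follow the IIIF Presentation API 2.x layout (`sequences`, then `canvases`, then `images`) and that the recto/verso information sits in each canvas `label`. The helper names `fetch_manifest` and `recto_annotations` are hypothetical, and the Beautiful Soup step that collects the collection IDs is not shown.

```python
import json
import urllib.request


def fetch_manifest(manifest_url: str) -> dict:
    """Download and parse one IIIF manifest (requires network access)."""
    with urllib.request.urlopen(manifest_url) as response:
        return json.load(response)


def recto_annotations(manifest: dict) -> list:
    """Return the image annotations of every canvas whose label mentions "recto".

    Assumes the IIIF Presentation 2.x layout: sequences -> canvases -> images.
    """
    kept = []
    for sequence in manifest.get("sequences", []):
        for canvas in sequence.get("canvases", []):
            # Labels may be plain strings or language maps; str() covers both crudely.
            label = str(canvas.get("label", "")).lower()
            if "recto" in label:
                kept.extend(canvas.get("images", []))
    return kept


# Offline example with a hand-written, two-canvas manifest.
example = {"sequences": [{"canvases": [
    {"label": "Foto 12 recto", "images": [{"@id": "anno-1"}]},
    {"label": "Foto 12 verso", "images": [{"@id": "anno-2"}]},
]}]}
print([a["@id"] for a in recto_annotations(example)])  # ['anno-1']
```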

In our project, we display photographs in a gallery sorted by country. The country information, however, is not present in the IIIF annotations. It is available on the root page of the Terzani archive[11], since collections are named after their origins. These names are not consistently formatted and are written in Italian, so we decided it would be easier to map each collection to its country by hand rather than algorithmically. One problem occurred for collections whose names include multiple countries. We cannot know which photo belongs to which of these countries, so we decided not to assign a country to any of these collections.
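A hand-made mapping of this kind can be as simple as a dictionary. The sketch below uses hypothetical placeholder keys and values, since the real collection names and countries are not listed here. Multi-country collections map to `None`, so their photos stay out of the country gallery. The `country` field name is also an assumption.

```python
from typing import Optional

# Hypothetical placeholders: the real keys are the collection IDs scraped from
# the archive root page, and the values were assigned by hand.
COLLECTION_TO_COUNTRY = {
    "collection-001": "Country A",
    "collection-002": "Country B",
    "collection-003": None,  # spans several countries, so no country is assigned
}


def country_of(collection_id: str) -> Optional[str]:
    """Country of a collection, or None if it is unmapped or spans several countries."""
    return COLLECTION_TO_COUNTRY.get(collection_id)


def with_country(annotation: dict, collection_id: str) -> dict:
    """Copy of an annotation record with a "country" field (hypothetical name) added."""
    return {**annotation, "country": country_of(collection_id)}


print(with_country({"id": 1}, "collection-003"))  # {'id': 1, 'country': None}
```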

Annotating the photographs

Once we had the IIIF annotations of the photographs, we annotated the photos using Google Cloud Vision[12]. This tool provides a Python API with a myriad of annotation features. Within the scope of this project, we used the following:

- Object localization: detects the objects in the image, along with their bounding boxes

- Text detection: an OCR tool that returns all text readable in the image, along with its bounding boxes

- Label detection: assigns general labels to the whole image

- Landmark detection: if the image shows a famous landmark, gives the name of the place as well as its coordinates

- Web detection: if similar photos exist on the web, gives their references along with a short description. We only use the description, as an additional label for the whole image.
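The sketch below shows how one photo could be sent with the five features listed above, and how the label and web-detection output could become whole-image tags. It assumes the `google-cloud-vision` client library (v2 or later) with credentials already configured. `annotate` and `image_tags` are hypothetical helper names. Lower-casing and de-duplicating the tags is our own assumption, not necessarily what the project does.

```python
from types import SimpleNamespace  # only used by the offline example at the end

# The five Google Vision features used in the project (names of vision.Feature.Type members).
FEATURE_NAMES = [
    "OBJECT_LOCALIZATION",
    "TEXT_DETECTION",
    "LABEL_DETECTION",
    "LANDMARK_DETECTION",
    "WEB_DETECTION",
]


def annotate(image_bytes: bytes):
    """Run all five features on one photo; needs google-cloud-vision and credentials."""
    # Imported here so the tag helper below can be used without the client library.
    from google.cloud import vision

    client = vision.ImageAnnotatorClient()
    request = vision.AnnotateImageRequest(
        image=vision.Image(content=image_bytes),
        features=[vision.Feature(type_=vision.Feature.Type[name]) for name in FEATURE_NAMES],
    )
    response = client.batch_annotate_images(requests=[request]).responses[0]
    if response.error.message:
        raise RuntimeError(response.error.message)
    return response


def image_tags(response) -> list:
    """Whole-image tags: label descriptions plus web-entity descriptions, de-duplicated."""
    tags = [label.description for label in response.label_annotations]
    tags += [entity.description for entity in response.web_detection.web_entities
             if entity.description]
    # dict.fromkeys removes duplicates while keeping the first-seen order.
    return list(dict.fromkeys(tag.lower() for tag in tags))


# Offline example: a stand-in object shaped like an AnnotateImageResponse.
fake_response = SimpleNamespace(
    label_annotations=[SimpleNamespace(description="Temple"),
                       SimpleNamespace(description="Monk")],
    web_detection=SimpleNamespace(web_entities=[SimpleNamespace(description="temple"),
                                                SimpleNamespace(description="")]),
)
print(image_tags(fake_response))  # ['temple', 'monk']
```

Separating the network call from the parsing keeps the tag logic testable offline with stand-in objects.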

For each IIIF annotation, we first download the corresponding photo and then use the Google Vision API to obtain all this new information. However, some of the values returned by Google Vision cannot be used as is. We processed bounding boxes and texts as follows:

- Bounding boxes: a bounding box is given by its four vertices, with coordinates normalized between 0 and 1. To display the bounding box with the IIIF format, we need the non-normalized coordinates of its top-left corner, as well as its width and height. Luckily, the width and height of the whole photo are present in the IIIF annotation, which allows us to "de-normalize" the coordinates. Computing the width and height of the bounding box is then simple algebra: each is the difference between the largest and smallest de-normalized coordinates along that axis.

- Texts: Ravi ?
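The de-normalization of bounding boxes can be sketched as below. It assumes the vertices have already been converted to `(x, y)` tuples (for example `[(v.x, v.y) for v in obj.bounding_poly.normalized_vertices]`) and that the photo's full width and height come from the IIIF annotation. The region URL uses the IIIF Image API 2.x `x,y,w,h` region syntax. The function names and the example service URL are hypothetical.

```python
def denormalize_box(normalized_vertices, width: int, height: int) -> tuple:
    """Turn normalized (x, y) vertices into a pixel box (left, top, w, h).

    width and height are the full photo dimensions taken from the IIIF annotation.
    """
    xs = [x for x, _ in normalized_vertices]
    ys = [y for _, y in normalized_vertices]
    # Round each edge separately so that left + w falls exactly on the right edge.
    left, right = round(min(xs) * width), round(max(xs) * width)
    top, bottom = round(min(ys) * height), round(max(ys) * height)
    return left, top, right - left, bottom - top


def iiif_region_url(image_service: str, box: tuple) -> str:
    """IIIF Image API 2.x URL cropping the photo to `box` (region syntax x,y,w,h)."""
    x, y, w, h = box
    return f"{image_service}/{x},{y},{w},{h}/full/0/default.jpg"


box = denormalize_box([(0.1, 0.2), (0.5, 0.2), (0.5, 0.6), (0.1, 0.6)], 1000, 800)
print(box)  # (100, 160, 400, 320)
print(iiif_region_url("https://example.org/iiif/photo-1", box))
# https://example.org/iiif/photo-1/100,160,400,320/full/0/default.jpg
```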

We then stored all of this information together in JSON format.

Photo feature vector

Database

As our data was already stored as JSON, it made sense to choose a database capable of handling JSON documents. We therefore opted for a NoSQL database, MongoDB. Using the PyMongo module, we created three collections in this database:

- Image Annotations: the main collection. Each object has a unique ID and contains an IIIF annotation, along with all the corresponding Google Vision information that comes with a bounding box (object localization, landmarks and OCR).

- Image Feature Vectors: a simple mapping between each object's ID and its photo feature vector.

- Image Tags: this collection contains one object for each label and each bounded object detected by Google Vision. Each object contains the list of IDs of the photos corresponding to this tag. This collection serves two purposes. First, it maps photo labels to all corresponding IIIF annotations. Second, it makes queries for bounded objects efficient. Without it, retrieving all images containing, for example, a car would require scanning every bounded object in the entire "Image Annotations" collection. That approach would not scale, as one photo may contain a dozen bounded objects. With this collection, one can easily get all the corresponding object IDs and then query these IDs from the "Image Annotations" collection.
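The "Image Tags" collection is essentially an inverted index. A minimal sketch of how it could be built and queried is shown below. The document field names (`_id`, `labels`, `objects`, `tag`, `ids`) and the collection names `image_tags` and `image_annotations` are hypothetical. `db` is assumed to be a PyMongo `Database`.

```python
from collections import defaultdict


def build_tag_documents(annotations: list) -> list:
    """Invert per-photo tags into one {"tag", "ids"} document per tag.

    Each annotation is assumed to look like
    {"_id": ..., "labels": [...], "objects": [{"name": ...}, ...]} (hypothetical fields).
    """
    index = defaultdict(list)
    for doc in annotations:
        tags = set(doc.get("labels", []))
        tags.update(obj["name"] for obj in doc.get("objects", []))
        # A photo with several cars is listed only once under "car".
        for tag in tags:
            index[tag].append(doc["_id"])
    return [{"tag": tag, "ids": ids} for tag, ids in sorted(index.items())]


def find_photos_by_tag(db, tag: str, limit: int = 21) -> list:
    """Two-step PyMongo lookup: tag -> photo IDs, then IDs -> annotation documents."""
    tag_doc = db["image_tags"].find_one({"tag": tag})
    if tag_doc is None:
        return []
    ids = tag_doc["ids"][:limit]
    # $in does not preserve the order of `ids`.
    return list(db["image_annotations"].find({"_id": {"$in": ids}}))


photos = [
    {"_id": 1, "labels": ["street"], "objects": [{"name": "car"}, {"name": "car"}]},
    {"_id": 2, "labels": ["temple"], "objects": [{"name": "person"}]},
    {"_id": 3, "labels": ["street"], "objects": [{"name": "car"}]},
]
print(build_tag_documents(photos)[0])  # {'tag': 'car', 'ids': [1, 3]}
```

The collection could then be filled with `db["image_tags"].insert_many(build_tag_documents(photos))`. Creating an index on the tag field first (`create_index("tag", unique=True)`) keeps each lookup cheap.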

Website

Back-end technologies

To build a web server that could easily handle data in the format we had used so far, a Python framework was the natural choice. As the web application did not require complex features (e.g. authentication), we chose Flask, which provides the essential tools to build a web server.

The server is mainly responsible for processing users' queries and acting as the bridge between the client and the database. It also handles the computationally heavy task of colorizing the photographs that users request.

Front-end technologies

To build our web pages, we naturally use standard HTML5/CSS3. To make the website responsive on all kinds of devices and screen sizes, we use Twitter's CSS framework Bootstrap. All client-side programming is done in JavaScript with the jQuery library. Finally, to easily insert data coming from the server into the pages, we use the Jinja2 templating language.

Gallery by country

To create the interactive map, we used the open-source JavaScript library Leaflet[13]. To highlight the countries that Terzani visited, we used Leaflet's ability to display GeoJSON on top of the map. We used GeoJSON maps[14] to build such a file containing only the desired countries.
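The same kind of file could also be produced in Python by filtering a full world GeoJSON file. This is an alternative sketch, not the tool the project used. It assumes each feature stores the country name in a property called `admin`, which is an assumption about the source file. The sample "world" below is a two-feature stand-in.

```python
import json


def keep_countries(feature_collection: dict, countries: set, name_key: str = "admin") -> dict:
    """New FeatureCollection holding only the features whose name is in `countries`.

    `name_key` is the property storing the country name; "admin" is an assumption.
    """
    return {
        "type": "FeatureCollection",
        "features": [
            feature for feature in feature_collection.get("features", [])
            if feature.get("properties", {}).get(name_key) in countries
        ],
    }


def save_geojson(feature_collection: dict, path: str) -> None:
    """Write the filtered collection to a file that Leaflet can load."""
    with open(path, "w", encoding="utf-8") as handle:
        json.dump(feature_collection, handle)


world = {"type": "FeatureCollection", "features": [
    {"type": "Feature", "properties": {"admin": "Thailand"}, "geometry": None},
    {"type": "Feature", "properties": {"admin": "France"}, "geometry": None},
]}
subset = keep_countries(world, {"Thailand"})
print([f["properties"]["admin"] for f in subset["features"]])  # ['Thailand']
```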

When the user clicks on a country, the client makes an AJAX request to the server asking for the first 21 photos of this country. In turn, the server queries the database to get the IIIF annotations of these 21 photos as well as the total number of photos from this country. When the client receives this information, it uses the image links from the IIIF annotations to display the photos to the user. The total number of results for a given country is used to compute the number of pages required to display them all. To create the pagination, we use HTML <a> tags which, on click, make an AJAX request to the server asking for the relevant IIIF annotations.
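On the server side, a gallery request could be served by a Flask view along the lines of the sketch below. It assumes a Flask `app` (1.1 or later, which serializes a returned dict to JSON) and a PyMongo database handle `db`. The route path, the collection name `image_annotations`, and the `country` and `iiif` fields are hypothetical. Pages are 1-based and hold 21 photos, as in the gallery.

```python
import math

PAGE_SIZE = 21  # photos per gallery page


def page_count(total: int, page_size: int = PAGE_SIZE) -> int:
    """Number of pages needed to show `total` photos."""
    return math.ceil(total / page_size)


def register_gallery_route(app, db):
    """Attach a JSON gallery endpoint to a Flask `app` backed by the PyMongo database `db`."""

    @app.route("/gallery/<country>/<int:page>")
    def gallery_page(country: str, page: int):
        query = {"country": country}
        annotations = db["image_annotations"]
        total = annotations.count_documents(query)
        cursor = (
            annotations.find(query, {"_id": 0, "iiif": 1})
            .sort("_id")  # a fixed order keeps pages stable between requests
            .skip((max(page, 1) - 1) * PAGE_SIZE)
            .limit(PAGE_SIZE)
        )
        return {"total": total, "pages": page_count(total), "photos": list(cursor)}

    return gallery_page
```

With `app = Flask(__name__)` and `db = MongoClient()["terzani"]` (a hypothetical database name), calling `register_gallery_route(app, db)` would expose URLs such as `/gallery/<country>/2`.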

Map of landmarks

When the user clicks on the "Show landmarks" button, an AJAX request is made to the server asking for the name and geolocation of all landmarks in the database. With this information, we use the Leaflet library to create a marker for each unique landmark. Leaflet also lets us create a customized pop-up that appears when such a landmark is clicked. These pop-ups contain simple HTML with a button which, on click, queries for the IIIF annotations of the corresponding landmark.
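Building one marker per unique landmark on the server could look like the sketch below. It assumes each annotation stores a `landmarks` list of `{"name", "lat", "lng"}` dicts, which are hypothetical field names, and it keeps the first coordinates seen for each name. The sample landmarks are placeholders. The same grouping could also be pushed into MongoDB with an aggregation pipeline (`$unwind` followed by `$group`).

```python
def unique_landmarks(annotations: list) -> list:
    """One marker per landmark name, keeping the first coordinates encountered.

    Each annotation is assumed to carry a "landmarks" list of
    {"name": str, "lat": float, "lng": float} dicts (hypothetical field names).
    """
    markers = {}
    for doc in annotations:
        for landmark in doc.get("landmarks", []):
            # setdefault keeps the first occurrence of each landmark name.
            markers.setdefault(landmark["name"], (landmark["lat"], landmark["lng"]))
    return [{"name": name, "lat": lat, "lng": lng} for name, (lat, lng) in markers.items()]


docs = [
    {"landmarks": [{"name": "Landmark A", "lat": 10.0, "lng": 100.0}]},
    {"landmarks": [{"name": "Landmark A", "lat": 10.1, "lng": 100.1},
                   {"name": "Landmark B", "lat": 20.0, "lng": 90.0}]},
    {},  # a photo without any landmark
]
print(unique_landmarks(docs))
```

On the client, each returned entry would then become one Leaflet marker, for example with `L.marker([lat, lng]).bindPopup(html)`.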

Search by Text

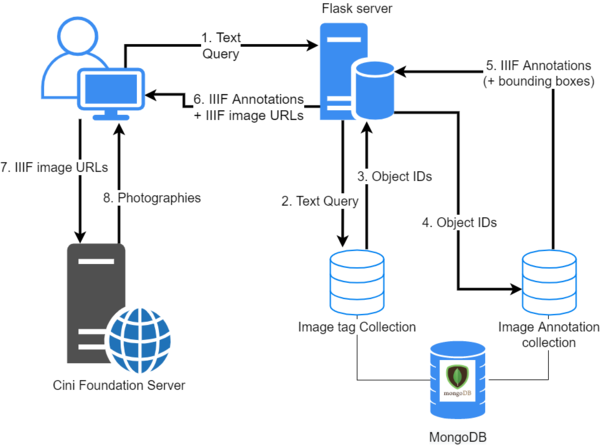

Querying photographs by text happens in several steps, described below. To make clear where each step happens in the pipeline, each one refers directly to the corresponding number in the diagram on the right. These steps do not cover pagination, as the process is exactly the same as for the gallery.

1. The user types a query on the website. The client sends a request containing the user input to the server.

2. Upon receiving the text query, the server .... **Ravi ?** . It then queries the Image Tags collection to retrieve the IDs of 21 objects matching the query.

3. The MongoDB database responds with the desired object IDs.

4. Upon receiving the object IDs, the server queries the Image Annotations collection to retrieve the IIIF annotations of these objects. If the user checked the "Only show bounding boxes" checkbox, the server also asks for the bounding box information.

5. The MongoDB database responds with the desired IIIF annotations and any bounding boxes.

6. When the server gets the IIIF annotations, it processes the IIIF image links. If the user wants to see only the bounding boxes, it builds IIIF image URLs so that each image is cropped around its bounding boxes. In any case, the IIIF image URLs are constructed so that the resulting images are square. Once this processing is done, the server sends all IIIF annotations along with these IIIF image URLs to the client.

7. Using Jinja2, an HTML <img> element is created for each image URL. The image data, hosted on the Cini Foundation server, is requested using these IIIF image URLs.

8. The Cini Foundation server answers with the image data, and the images can finally be displayed to the user.
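The square cropping done when the server builds the IIIF image URLs could look like the sketch below, using the IIIF Image API 2.x region syntax `x,y,w,h` and the `w,` size syntax. It assumes a pixel bounding box `(x, y, w, h)`, the photo's full width and height from the IIIF annotation, and an image service base URL. The helper names and the 300-pixel output width are hypothetical. Passing the whole photo `(0, 0, width, height)` as the box gives a centered square crop when bounding boxes are not requested.

```python
def square_region(box: tuple, width: int, height: int) -> tuple:
    """Square (left, top, side, side) centered on `box` and kept inside the photo.

    The side is the box's longer edge, capped at the photo's shorter edge.
    """
    x, y, w, h = box
    side = min(max(w, h), width, height)
    # Center the square on the box with integer arithmetic, then clamp it to the image.
    left = min(max((2 * x + w - side) // 2, 0), width - side)
    top = min(max((2 * y + h - side) // 2, 0), height - side)
    return left, top, side, side


def square_iiif_url(image_service: str, box: tuple, width: int, height: int,
                    size: int = 300) -> str:
    """IIIF Image API 2.x URL for a square crop around `box`, scaled to `size` pixels wide."""
    left, top, side, _ = square_region(box, width, height)
    return f"{image_service}/{left},{top},{side},{side}/{size},/0/default.jpg"


print(square_region((100, 160, 400, 320), 1000, 800))  # (100, 120, 400, 400)
print(square_region((0, 0, 1000, 800), 1000, 800))     # (100, 0, 800, 800)
```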

Search by Image

Quality assessment

We have created a structured pipeline that performs the crucial steps of extracting the data and making it available to search engines. In the following subsections, we analyze and evaluate the effectiveness of each step.

Data Harvesting

The first step in the pipeline is to obtain the photographs available on the Cini Foundation server. As the website does not provide an API to access the data, we resorted to standard web scraping techniques on the HTML page and created a binary file to store the IIIF annotations of the images. Although we successfully extracted all the images present on the server, a certain amount of manual work prevents this step from being fully autonomous. The other hurdle is the country information for each photograph, which we annotated manually by going through the website. As a result, not all images have a country associated with them, since some collections span multiple countries.

Text based Image search

Creating the tags used to search images is one of the trickiest steps in the pipeline, as it is the step over which we have the least control. The Google Vision API produced sufficiently reliable results for most photographs containing objects that are still commonly seen in recent photography. For text, however, the API is limited in the languages it can detect automatically, so most of the detected text is written in the Latin alphabet. Nevertheless, when text is detected, the API often finds text that is hard to spot with the naked eye. As we do not store the images on Google Cloud Storage, the annotation cannot run asynchronously and takes a long time.

Having to type exact words to find a match is always tedious for the user. We therefore used regular expressions for the search queries. However, this can return many results that are not always relevant. For instance, a search for "car" or "cat" can return images of a carving or a cathedral.
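The trade-off can be reproduced with Python's `re` module. The sketch assumes tags are matched as case-insensitive substrings. This fits the car/carving example but is not confirmed to be the project's exact pattern. The word-boundary variant is shown only for comparison, and the `tag` field name in the MongoDB filter is hypothetical.

```python
import re


def substring_pattern(query: str) -> re.Pattern:
    """Case-insensitive pattern matching the query anywhere inside a tag."""
    return re.compile(re.escape(query), re.IGNORECASE)


def word_pattern(query: str) -> re.Pattern:
    """Stricter variant that only matches the query as a whole word."""
    return re.compile(rf"\b{re.escape(query)}\b", re.IGNORECASE)


def mongo_tag_filter(query: str) -> dict:
    """Equivalent MongoDB filter on a hypothetical "tag" field."""
    return {"tag": {"$regex": re.escape(query), "$options": "i"}}


tags = ["car", "carving", "cathedral", "cat", "street"]
print([t for t in tags if substring_pattern("car").search(t)])  # ['car', 'carving']
print([t for t in tags if word_pattern("car").search(t)])       # ['car']
```

Escaping the user input with `re.escape` keeps characters such as `.` or `*` from being interpreted as regex syntax.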

<write user feedback on search results>

The results in this section of the website were broadly acceptable.

Similar Image search

As with the text search, the results in this section cannot be measured with a metric. We observed that results are returned based on the structures present in the source image. These results are appropriate most of the time, as the engine returns faces for faces, buildings for buildings, and cars for cars. Since most of the photographs are monochrome, the colors in the source image do not significantly aid the search process.

Although the next issue does not directly concern the quality of the results, it affects the user's interaction with the service. Currently, the comparisons between images are performed sequentially. Parallelizing them would speed up the process.

<write user feedback on search results>

Code Realisation and GitHub Repository

The GitHub repository of the project is available at Terzani Online Museum. The project has two main components. The first one creates a database of the images with their corresponding tags, the bounding boxes of identified objects, landmarks and text, and their feature vectors. The functions related to these operations are in the terzani folder (package), and the corresponding scripts are in the scripts folder. The second component is the website, located in the website directory. Installation and usage details are available in the GitHub repository.

Limitations and Scope for Improvement

Schedule

| Timeframe | Tasks |
|---|---|
| Week 5-6 | |
| Week 6-7 | |
| Week 7-8 | |
| Week 8-9 | |
| Week 9-10 | |
| Week 10-11 | |
| Week 11-12 | |
| Week 12-13 | |
| Week 13-14 | |